by Simone Onofri (W3C) and Giovanni Corti (FBK)

W3C has published a Threat Modeling Guide that expands security analysis to include societal impacts and promotes the publication of reusable, public threat models, aligning threat modeling with Open Science principles of transparency, reuse, and accountability.

Threat modeling is commonly described as a structured practice for defining a system, identifying threats and potential attacks, selecting responses to address them, and iterating as the system evolves [1]. In standardization, the system is often the specification, together with how it is implemented and deployed in its environment. When a specification forms part of the foundation of a digital infrastructure, the threat model should capture not only threats to the system but also threats that the system generates for people, communities, and the environment. This perspective aligns with Open Science by emphasising transparency in design decisions and making the societal impacts of digital infrastructure visible and accountable.

From system-centric to human-centric threat modeling

The Threat Modeling Guide integrates the stakeholder perspective. After the classic first step, which answers the question “what are we working on” [2], and once the system has been modeled, the guide also asks “who is impacted” by the system. This stakeholder impact analysis considers not only the users of the system but also those who are not users, for example because the design of the system itself has excluded them. The goal of this step is to identify these stakeholders so that design trade-offs can be discussed as early as possible and mitigations can be provided at the standard level. By making stakeholder impacts explicit, this approach supports more transparent and inclusive design processes, which are central to Open Science practices.

From threats to the system to threats to humans

After identifying the system and its stakeholders, the next step is still “what can go wrong,” but with a broader scope: to understand both threats to the system and threats generated by the system for its stakeholders. This is a crucial phase and brings a well-known challenge in security threat modeling: developers with expertise in the system may lack security expertise, while security experts knowledgeable about threats and impacts may lack expertise in the specific system.

This challenge is even more pronounced for societal impacts, which are often expressed in aspirational or symbolic language (e.g., fairness, dignity, non-discrimination) that must be translated into concrete technical and governance levers.
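As an illustration only, the broadened scope described above can be sketched as a small data structure that records stakeholders (including non-users) alongside threats in both directions. This is a minimal sketch, not a format defined by the Threat Modeling Guide: the class names, fields, and example entries are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Direction(Enum):
    # Hypothetical labels for the two scopes discussed in the text.
    TO_SYSTEM = "threat to the system"
    FROM_SYSTEM = "threat generated by the system"


@dataclass
class Stakeholder:
    name: str
    is_user: bool  # False for people affected without using the system


@dataclass
class Threat:
    description: str
    direction: Direction
    affected: list[str] = field(default_factory=list)  # stakeholder names


@dataclass
class ThreatModel:
    system: str
    stakeholders: list[Stakeholder] = field(default_factory=list)
    threats: list[Threat] = field(default_factory=list)

    def non_users(self) -> list[str]:
        """Names of stakeholders who are impacted but are not users."""
        return [s.name for s in self.stakeholders if not s.is_user]

    def threats_to_people(self) -> list[Threat]:
        """Threats that the system generates for its stakeholders."""
        return [t for t in self.threats if t.direction is Direction.FROM_SYSTEM]


# Illustrative entries only; they are not taken from a published threat model.
model = ThreatModel(
    system="hypothetical digital identity specification",
    stakeholders=[
        Stakeholder("credential holder", is_user=True),
        Stakeholder("person without a supported device", is_user=False),
    ],
    threats=[
        Threat("credential forgery", Direction.TO_SYSTEM, ["credential holder"]),
        Threat(
            "exclusion by design",
            Direction.FROM_SYSTEM,
            ["person without a supported device"],
        ),
    ],
)
```

Keeping the direction of each threat explicit makes it straightforward to list the threats generated by the system separately from the classic threats to the system, and to see which non-users they affect.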

Figure 1: A model to illustrate discriminatory vetting/processes based on individual characteristics.

To overcome this challenge, threat modeling practices are complemented by facilitation techniques, such as serious games, including card games, to assist in threat elicitation. For example, in addition to using documentation such as the “Self-Review Questionnaire: Security and Privacy” to identify issues, the W3C community also uses STRIDE cards for security, and LINDDUN cards for privacy, which are promising tools for helping even those unfamiliar with specific threats identify them. Addressing this gap is essential not only for effective security design but also for ensuring that knowledge about risks and impacts can be shared and understood across communities.

Bridging vocabularies with a LEGO SERIOUS PLAY workshop

Digital identities, particularly those issued by governments, represent a high-impact societal use case, as they can serve as a technology of inclusion or exclusion, or can promote surveillance.

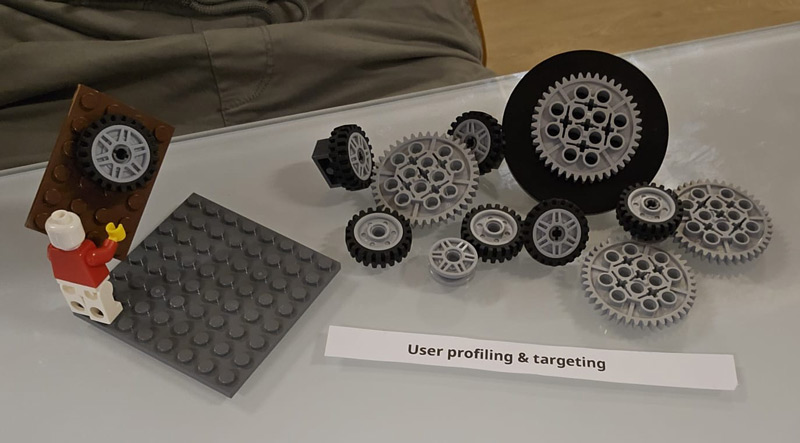

In October 2025, W3C experimented with an approach that was deliberately not limited to textual discussions: a facilitated workshop with LEGO® SERIOUS PLAY® (LSP) [L1]. The objective was to help participants make abstract and societal impacts more concrete.

During the workshop, LSP was used as a facilitation tool for the Threat Modeling session. Starting with the harm taxonomy proposed in “Enhancing National Digital Identity Systems” [3], participants were asked to build a physical, metaphorical representation of the derived threats using LEGO bricks, thereby creating a threat landscape and connecting the various threats. The three-dimensional models served as boundary objects: they enabled participants with different professional and cultural vocabularies to converge on a common understanding of what an abstract harm is, how it manifests, and which technical or governance assumptions enable it.

Figure 2: A model to illustrate user profiling and targeting.

Why public threat models matter

A final element emphasised by the guide is the publication of the threat model, a practice closely aligned with Open Science. Non-public threat models create knowledge asymmetries and limit reuse; public threat models make assumptions visible, clarify trade-offs, and expose the boundaries of responsibility among specifications, implementations, deployments, and governance, providing an element of accountability. In this way, public threat models function as open research artefacts that can be inspected, reused, and built upon by a wider community.

From a purely research perspective, publishing open threat models makes them reusable knowledge artifacts. They can be compared with others, extended by other standardization bodies or policymakers, and studied longitudinally as technologies evolve. In this sense, human-centered threat modeling becomes not only an engineering practice but also a means of analyzing the societal impact of technology and a contribution to Open Science, enabling transparent, reusable, and comparable knowledge about that impact.

Links:

[L1] https://www.w3.org/blog/2025/threat-modeling-with-lego-serious-play-building-your-digital-identity-threat/

[L2] https://www.ohchr.org/en/documents/tools-and-resources/tech-and-human-rights-study-making-technical-standards-work-humanity

[L3] https://digitallibrary.un.org/record/4031373?ln=en&v=pdf

[L4] https://onlinelibrary.wiley.com/doi/book/10.1002/9781119183631

References:

[1] World Wide Web Consortium (2026) Threat modeling guide. Group Note Draft http://w3.org/TR/threat-modeling-guide/

[2] Shostack, A. (2014). Threat modeling: Designing for security. John Wiley & Sons

[3] G. Corti, G. Sassetti, A. Sharif, R. Carbone, and S. Ranise, “Enhancing National Digital Identity Systems: A Framework for Institutional and Technical Harm Prevention Inspired by Microsoft’s Harms Modeling,” in Proc. 22nd Int. Conf. on Security and Cryptography (SECRYPT 2025), 2025, pp. 723–728.

Please contact:

Simone Onofri, W3C