by Rasmus Adler (Fraunhofer IESE) and Patrik Feth (SICK AG)

Machines in an Industry 4.0 context need to behave both safely and smartly. This means that these smart machines need to estimate the current risk continuously and precisely, so that they do not shut down or degrade unnecessarily. To enable smart safe behaviour, Fraunhofer IESE is developing new safety assurance concepts. SICK supports related safety standards to implement these concepts in an industrial setting.

Smart machines need to behave safely in a smart way, predicting accidents and adapting their behaviour to avoid them, while efficiently fulfilling their original mission. The prediction of possible accidents is a challenging task. It requires perceiving the current situation and anticipating how it will evolve over time. For safety reasons, any uncertainty in this dynamic risk management must be compensated by a worst-case assumption. This limits the performance significantly. For instance, if a smart machine is not sure about the behaviour of another smart machine, it must assume the most critical behaviour and behave very cautiously even in situations where it is not necessary.

A promising approach for maximising performance while ensuring safety is cooperative risk management. If all smart machines, regardless of their manufacturer, shared their information about the current situation and its evolution, we could minimise the number of worst-case assumptions. However, the claims that each individual machine makes about the situation and its evolution could be wrong. Thus, Fraunhofer IESE proposes extending these claims into a machine-readable assurance case that captures the underlying reasoning and assumptions.
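The effect of shared information on worst-case assumptions can be illustrated with a toy calculation. The following sketch is not taken from the DEIS project; the names `SpeedBound`, `WORST_CASE` and `required_separation`, the numeric values, and the simplified distance formula are all assumptions chosen for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SpeedBound:
    """Upper bound on another machine's speed, in m/s (hypothetical type)."""
    max_speed: float


# Assumed worst case used when nothing is known about the other machine.
WORST_CASE = SpeedBound(max_speed=2.0)


def required_separation(own_speed: float,
                        peer: Optional[SpeedBound],
                        reaction_time: float = 0.5) -> float:
    """Distance both machines could close on each other during reaction_time.

    Deliberately simplified: a real separation calculation would follow
    the applicable safety standard, not this toy formula.
    """
    bound = peer if peer is not None else WORST_CASE
    return (own_speed + bound.max_speed) * reaction_time


# Without shared information the worst-case bound applies.
no_info = required_separation(own_speed=1.0, peer=None)

# With a (trusted) shared claim, the bound and the required distance shrink.
shared = required_separation(own_speed=1.0, peer=SpeedBound(max_speed=0.5))
```

In this sketch the shared claim halves the required separation, but only if the receiving machine can trust the claim, which is the gap the assurance case is meant to close.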

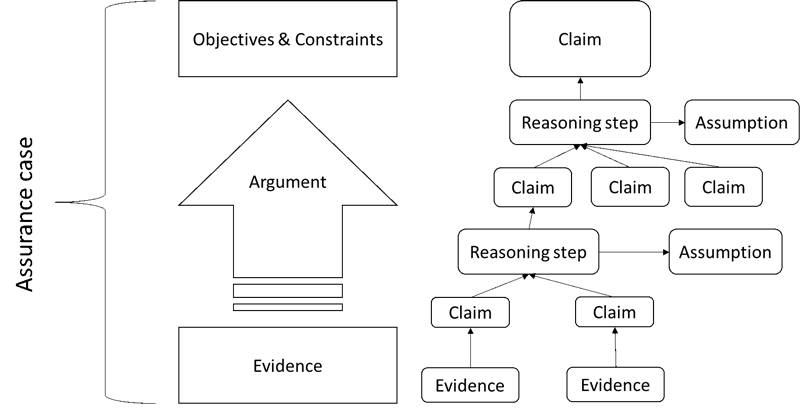

Figure 1: Illustration of an assurance case.

As shown in the left part of Figure 1, an assurance case is a reasoned and compelling argument, supported by evidence such as test results, that an entity achieves its objectives and constraints.

As shown in the right part of Figure 1, the argument generally consists of several reasoning steps. Each step states that a higher-level claim is true if some sub-claims are true and some assumptions hold.
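To make this structure concrete, the following minimal sketch models a reasoning step as a small tree of claims. It is an illustration under stated assumptions, not the SACM meta-model or the DDI format: the class `Claim`, its fields, and the example statements are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Claim:
    """One node of an argument: a claim with sub-claims, assumptions, evidence."""
    statement: str
    sub_claims: List["Claim"] = field(default_factory=list)
    assumptions: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)

    def holds(self, valid_assumptions: Set[str],
              accepted_evidence: Set[str]) -> bool:
        # Every assumption attached to this step must hold.
        if not all(a in valid_assumptions for a in self.assumptions):
            return False
        # A claim with sub-claims is true only if all sub-claims are true.
        if self.sub_claims:
            return all(c.holds(valid_assumptions, accepted_evidence)
                       for c in self.sub_claims)
        # A leaf claim needs at least one accepted piece of evidence.
        return any(e in accepted_evidence for e in self.evidence)


# Hypothetical example argument.
top = Claim(
    statement="Reported object position is correct",
    assumptions=["temperature within rated range"],
    sub_claims=[
        Claim("Sensor measures distance correctly", evidence=["test report T1"]),
        Claim("Data evaluation is implemented correctly", evidence=["review R7"]),
    ],
)

evidence = {"test report T1", "review R7"}
ok = top.holds({"temperature within rated range"}, evidence)
broken = top.holds(set(), evidence)  # the assumption does not hold
```

Here `ok` is `True` and `broken` is `False`: the same evidence supports the top-level claim only while the stated assumption holds.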

The idea behind machine-readable assurance cases is to enable runtime evaluations of the assumptions used in the argumentation. A smart machine can evaluate if the assumptions used in the assurance case received from another smart machine hold in the current usage scenario. For instance, if the provided information is only correct if a temperature is within a certain range, then a smart machine receiving this information can check if the temperature is currently within this range. Furthermore, a smart machine may evaluate the assumptions with respect to the required integrity. If there are too many arguable assumptions, then the smart machine might decide that the integrity is insufficient.
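A runtime check of this kind could look roughly like the sketch below. It assumes that assumptions are exchanged as simple value ranges and that each one carries a flag marking it as arguable; `RangeAssumption`, `accept_information`, the quantity names and the threshold `max_arguable` are hypothetical and not part of the DDI or SACM definitions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class RangeAssumption:
    """Assumption that a measured quantity stays within [low, high]."""
    quantity: str
    low: float
    high: float
    arguable: bool = False  # True if the receiver cannot fully rely on it

    def holds(self, context: Dict[str, float]) -> bool:
        value = context.get(self.quantity)
        # A quantity that cannot be observed is treated as a violation.
        return value is not None and self.low <= value <= self.high


def accept_information(assumptions: List[RangeAssumption],
                       context: Dict[str, float],
                       max_arguable: int) -> bool:
    """Accept received information only if all assumptions hold in the
    current context and not too many of them are arguable."""
    if not all(a.holds(context) for a in assumptions):
        return False
    arguable_count = sum(1 for a in assumptions if a.arguable)
    return arguable_count <= max_arguable


# Assumptions received from another machine (illustrative values).
received = [
    RangeAssumption("temperature_c", -10.0, 50.0),
    RangeAssumption("ambient_light_lux", 0.0, 40000.0, arguable=True),
]

normal = accept_information(
    received, {"temperature_c": 21.0, "ambient_light_lux": 500.0}, max_arguable=1)
too_hot = accept_information(
    received, {"temperature_c": 60.0, "ambient_light_lux": 500.0}, max_arguable=1)
strict = accept_information(
    received, {"temperature_c": 21.0, "ambient_light_lux": 500.0}, max_arguable=0)
```

In this sketch `normal` is accepted, `too_hot` is rejected because the temperature assumption is violated, and `strict` is rejected because a higher required integrity tolerates no arguable assumptions. If the information is rejected, the receiving machine would fall back to its worst-case assumption.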

Fraunhofer IESE is developing solutions for implementing this idea in the DEIS project [L1]. In this project, we use the term Digital Dependability Identity (DDI) for all the information that uniquely describes the dependability characteristics of a system or component [1]. We chose the Structured Assurance Case Metamodel (SACM) [L2] as the basis for describing DDIs, because SACM is a meta-model for assurance case languages.

However, the concept of ensuring safety through machine-readable assurance cases conflicts with traditional standards in the domain of industrial automation. In this domain, it is common to safeguard a critical application by using dedicated protective devices, mainly sensor devices with a fixed data evaluation function, that provide a certain safety function with a guaranteed level of integrity; e.g., a safety laser scanner detecting an intrusion into a preconfigured safety field. These devices and the functions they provide conform to harmonised standards such as IEC 61496-3. For a specific technology, such standards describe very precise requirements for achieving a particular integrity level; IEC 61496-3, for example, covers active opto-electronic protective devices responsive to diffuse reflection.

In European countries, the use of such devices is strongly encouraged, as the Machinery Directive explicitly covers protective devices designed to detect the presence of persons. Along with the existence of specific harmonised standards, this has fostered the belief in the domain of industrial automation that safety equals conformance with such standards. This limits the possibilities for building safe systems, as it constrains the options for reducing the risk of a critical application to existing standardised protective devices. In particular, it hinders the implementation of the above-mentioned cooperative risk management.

To overcome this limitation, SICK supports the recently published technical specification IEC TS 62998-1. This technical specification gives guidance for the development of safety functions beyond existing sensor-specific standards such as IEC 61496-3. It facilitates the use of new sensor technologies (e.g., radar, ultrasonic sensors), new kinds of sensor functions (e.g., classification of objects, position of an object), combinations of different sensor technologies in a sensor system, and usage under new conditions (e.g., outdoor applications). To this end, IEC TS 62998-1 requires analysing the capabilities of a sensor used in the intended application. Under this new technical specification, a system realising cooperative risk management can be considered a safety-related sensor system with a corresponding safety function.

To support future Industry 4.0 applications, sensor models could incorporate the information required by IEC TS 62998-1 and could become part of a machine-readable assurance case of the device. With this information stored in the assurance case, it would become possible to use the device with its provided sensor functions in critical applications unforeseen during the initial development of the device.

Links:

[L1] http://www.deis-project.eu/home/

[L2] https://kwz.me/hEb

Reference:

[1] E. Armengaud et al.: “DEIS: Dependability Engineering Innovation for Industrial CPS”, in: C. Zachäus, B. Müller, G. Meyer (eds): ‘Advanced Microsystems for Automotive Applications 2017’, Lecture Notes in Mobility. Springer, 2018.

Please contact:

Rasmus Adler

Fraunhofer Institute for Experimental Software Engineering IESE, Germany

Patrik Feth

SICK AG, Germany