by Patrizia Ribino and Carmelo Lodato (ICAR-CNR)

Human interactions are fundamentally based on normative principles. Particularly in social contexts, human behaviours are affected by social norms. Individuals expect certain behaviours from other people, who are perceived to have an obligation to act according to the expected behaviour. Giving robots the ability to interact with humans, on human terms, is an open challenge. People are more willing to accept robotic systems in daily life when the robots engage in socially desirable behaviours with benevolent interaction styles. Furthermore, allowing robots to reason about social situations, which involve sets of social norms that generate expectations, may improve the dynamics of human-robot interactions and the processes by which robots evaluate their own behaviours.

Human-robot social interactions play an essential role in extending the use of robots in daily life. It is widely argued that, to be social, robots need the ability to engage in interactions with humans based on the same principles that humans follow. The cognitive and social sciences hold that human interactions are fundamentally based on normative principles. Most human interactions are influenced by deep social and cultural standards, known as social norms [1]. Social norms are behavioural expressions of abstract social values (e.g., politeness and honesty) that underlie the preferences of a community. Social norms guide human behaviours and generate expectations of compliance that are considered to be legitimate. An open challenge is how to incorporate norm processing into robotic architectures [2].

At the Cognitive Robotics and Social Sensing Lab at ICAR-CNR [L1], we are working on a normative reasoning approach that takes advantage of goal-orientation, using high-level abstractions to implement appropriate algorithms that allow social robots to proactively reason about dynamic normative situations. Our normative reasoning is grounded in a tuple of mental concepts: the state of the world, goals, capabilities, qualitative goals, social norms, and expectations. In particular, a state of the world represents the conditions or set of circumstances in which a robot operates at a specific time. A goal describes a desired state of affairs that a robot wants to achieve. Capabilities are abstract descriptions of the abilities a robot can use to reach its objectives. A qualitative goal is a goal for which satisfaction criteria are not defined in a clear-cut way. It allows us to model the pursuit of a social value that cannot be described in terms of a clear condition to be reached. A social robot continuously performs actions that contribute positively to sustaining that social value. The actions to be performed are prescribed by the social norms, which are defined using desirability operators that represent preferences about acceptable behaviours. Consider a society where politeness is a social value to be pursued: the norm “it is desirable that a person gives up their seat if an elderly person is standing up” prescribes an acceptable behaviour within the community. Finally, expectations are motivators for pursuing social values. Just like a human, a robot may comply with a social norm in the presence of relevant expectations, but it may decide not to follow the norm in the absence of such expectations, thus revising its beliefs.
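To make the tuple of mental concepts more concrete, the following is a minimal Python sketch of how they might be represented. It is an illustration only and not the authors' AgentSpeak encoding: all class names, field names and proposition strings (such as `robot_seated`) are hypothetical, and the rule that a norm applies only when a matching expectation exists is a deliberate simplification of the robot's freedom to decide.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List

# Assumption: a state of the world is the set of propositions that currently hold.
State = FrozenSet[str]


@dataclass(frozen=True)
class Goal:
    name: str
    satisfied: Callable[[State], bool]  # clear-cut satisfaction condition


@dataclass(frozen=True)
class QualitativeGoal:
    social_value: str  # e.g. "politeness"; has no clear-cut satisfaction condition


@dataclass(frozen=True)
class Capability:
    name: str      # abstract ability of the robot
    achieves: str  # proposition the ability can bring about


@dataclass(frozen=True)
class SocialNorm:
    name: str
    social_value: str                   # value the norm helps to sustain
    condition: Callable[[State], bool]  # situation in which the norm is relevant
    action: str                         # behaviour the norm refers to
    desirable: bool = True              # desirability operator (True = desirable)


@dataclass(frozen=True)
class Expectation:
    norm_name: str  # norm that others expect the robot to follow
    holder: str     # who holds the expectation


def applicable_norms(state: State,
                     norms: List[SocialNorm],
                     expectations: List[Expectation]) -> List[SocialNorm]:
    """Return norms whose condition holds and for which an expectation exists."""
    expected = {e.norm_name for e in expectations}
    return [n for n in norms if n.name in expected and n.condition(state)]


# Example: the seat norm from the text, with hypothetical proposition names.
n3 = SocialNorm(
    name="N3",
    social_value="politeness",
    condition=lambda s: {"robot_seated", "elderly_person_standing"} <= s,
    action="give_up_seat",
)
state = frozenset({"robot_seated", "elderly_person_standing"})
with_expectation = applicable_norms(state, [n3], [Expectation("N3", "bystanders")])
without_expectation = applicable_norms(state, [n3], [])
```

In this sketch the norm is selected only when someone expects compliance, which mirrors the role of expectations as motivators; a fuller model would let the robot weigh the decision rather than apply a fixed rule.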

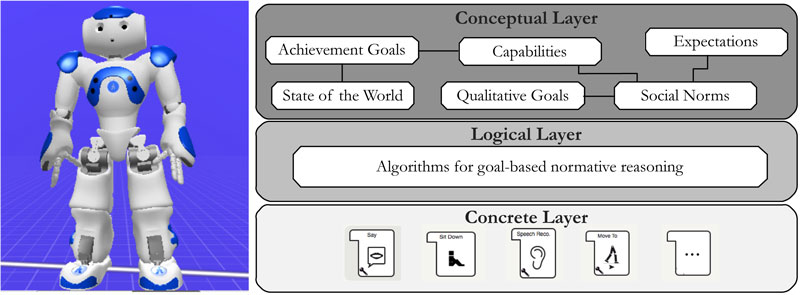

Figure 1 shows the architectural schema we implemented for incorporating norm reasoning into a robotic framework. The conceptual layer is responsible for providing a uniform representation of the mental concepts that are the basis for the reasoning process. It uses AgentSpeak, a programming language for building rational agents based on the belief-desire-intention paradigm.

Figure 1: Architectural schema for incorporating norm reasoning into a robotic framework.

The logical layer provides high-level decision making based on declarative knowledge coming from the conceptual layer. It consists of a set of components for normative reasoning, goal deliberation and means-end reasoning implemented in Jason. Its outcome is a declarative representation of the tasks a robot has to perform to fulfil the deliberated goal in the context in which it is operating.

Finally, the concrete layer provides the procedural knowledge for performing declarative tasks coming from the upper level. It consists of a set of Python modules that implement concrete tasks a robot may perform.
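As a rough illustration of how declarative tasks from the logical layer could be bound to procedural Python code in the concrete layer, consider the sketch below. The registry, the decorator and the task names (`greet`, `apologise`) are assumptions made for this example; the actual modules and the interface to Jason are not described here.

```python
from typing import Callable, Dict, List

# Hypothetical registry mapping declarative task names to concrete procedures.
TASKS: Dict[str, Callable[[], str]] = {}


def task(name: str) -> Callable[[Callable[[], str]], Callable[[], str]]:
    """Register a Python function as the concrete implementation of a task."""
    def register(fn: Callable[[], str]) -> Callable[[], str]:
        TASKS[name] = fn
        return fn
    return register


@task("greet")
def greet() -> str:
    # A real robot would drive speech or gesture hardware here.
    return "Hello!"


@task("apologise")
def apologise() -> str:
    return "I'm sorry"


def execute(plan: List[str]) -> List[str]:
    """Run each declarative task in order and collect the results."""
    results = []
    for name in plan:
        if name not in TASKS:
            raise KeyError(f"no concrete task registered for {name!r}")
        results.append(TASKS[name]())
    return results


spoken = execute(["greet", "apologise"])
```

Keeping the mapping in a registry means the upper layers only need to agree on task names, while the procedural details stay inside the Python modules.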

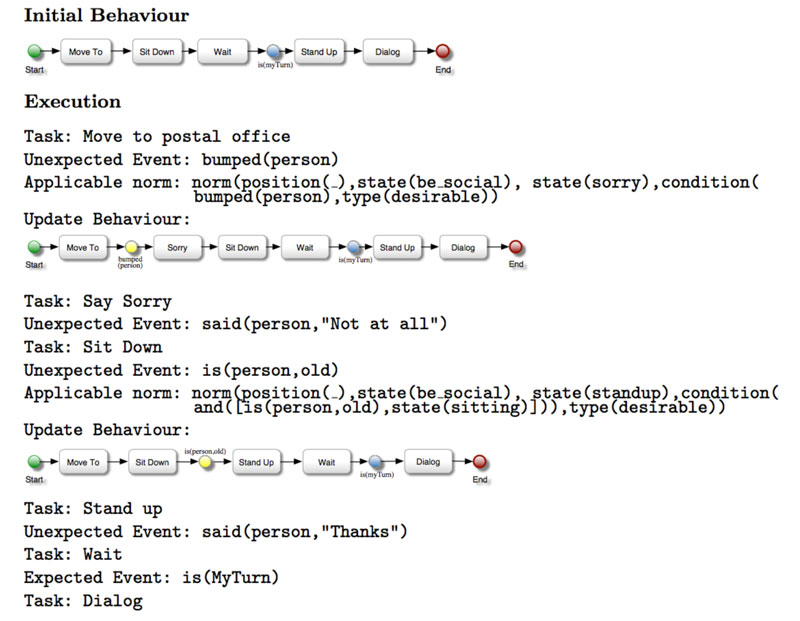

Figure 2 shows a scenario that involves social norms. A robot is committed to collecting a parcel from a post office. It knows that it is desirable to offer a polite greeting when it meets someone (N1), to say “I’m sorry” if it bumps into someone (N2) and to be kind to the elderly by giving up its seat (N3). The first workflow in Figure 2 represents the initial behaviour orchestrated by the robot for reaching its goal. On its way to the post office, it bumps into someone, an event that triggers norm N2. The robot therefore changes its planned behaviour by performing the task for apologising. Then, when it arrives at the post office, it sees a free chair and sits down. When an elderly person arrives at the post office, the robot changes its plan again by following norm N3.

Figure 2: The robot dynamically assumes the most suitable behaviour according to different social situations performing the actions prescribed by social norms.

Our approach allows robots to dynamically assume the most suitable behaviour in different social situations: the generated plan is revised by introducing the desirable actions and deleting the undesirable actions prescribed by social norms.
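The plan revision described for the post office scenario can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the Jason implementation: the event names, the step names, and the extra norm `N3b` (which marks staying seated as undesirable) are hypothetical and were added only to show both the introduction and the deletion of actions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Norm:
    name: str
    trigger: str            # event that activates the norm (hypothetical names)
    action: str             # plan step the norm refers to
    desirable: bool = True  # True: introduce the step, False: delete it


def revise_plan(remaining: List[str], event: str, norms: List[Norm]) -> List[str]:
    """Return a revised copy of the remaining plan after an event occurs."""
    plan = list(remaining)
    for norm in norms:
        if norm.trigger != event:
            continue
        if norm.desirable:
            # Introduce the desirable action as the next step, if not planned yet.
            if norm.action not in plan:
                plan.insert(0, norm.action)
        else:
            # Delete every occurrence of the undesirable action.
            plan = [step for step in plan if step != norm.action]
    return plan


NORMS = [
    Norm("N1", trigger="met_someone", action="greet"),
    Norm("N2", trigger="bumped_into_someone", action="apologise"),
    Norm("N3", trigger="elderly_person_standing", action="give_up_seat"),
    # Assumed companion norm, not in the original scenario description.
    Norm("N3b", trigger="elderly_person_standing", action="wait_seated",
         desirable=False),
]

# Walking to the post office, the robot bumps into someone (N2 fires).
after_bump = revise_plan(
    ["enter_post_office", "wait_seated", "collect_parcel"],
    "bumped_into_someone", NORMS)

# Seated in the post office, an elderly person arrives (N3 and N3b fire).
after_arrival = revise_plan(
    ["wait_seated", "collect_parcel"],
    "elderly_person_standing", NORMS)
```

Under these assumptions `after_bump` starts with the apology before the original steps resume, and `after_arrival` replaces waiting in the seat with giving it up while the goal of collecting the parcel is preserved.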

Link:

[L1] https://kwz.me/htr

References:

[1] C. Bicchieri and R. Muldoon: “Social norms”, in Edward N. Zalta (ed.), The Stanford Encyclopedia of Philosophy, Metaphysics Research Lab, Stanford University, spring 2014 ed., 2014.

[2] B. F. Malle, M. Scheutz, and J. L. Austerweil: “Networks of social and moral norms in human and robot agents”, in A World with Robots, pages 3–17, 2017.

Please contact:

Patrizia Ribino, ICAR-CNR, Italy

+39 091 8031069