by Lucile Sassatelli (Université Côte d'Azur, CNRS, I3S) and Hui-Yin Wu (Université Côte d'Azur, Inria)

Designing AI models to help analyse film corpora by detecting character objectification, or "male gaze"? Building an interdisciplinary synergy between three laboratories in social sciences and three laboratories in computer science, this is the endeavor of the TRACTIVE research project [L1] we present in this article.

Characterizing and quantifying gender representation disparities in audiovisual storytelling can help us understand how stereotypes are perpetuated on screen, through the media we consume daily. We present the multidisciplinary project TRACTIVE where we introduce a new task to the AI community: detecting whether characters in ovie clips are objectified, broadly defined as a character being portrayed as an object of desire or service, rather than the subject of action. This task poses an interesting multimedia challenge, as objectification is characterised by complex multimodal (visual, speech, audio) temporal patterns. We exemplify this through the creation of a video dataset with fine-grained annotations of instances of objectification, and the careful design of explainable and multimodal models to detect them.

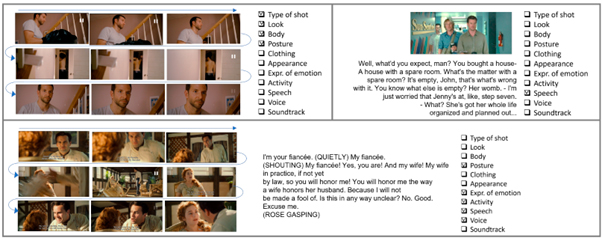

The first step of the project was therefore to create data: we introduced the Multimodal Objectifying Gaze (MObyGaze) dataset to the community. This dataset includes 20 films annotated in detail with instances and elements of objectification. Objectification is both a social and psychological construct that is manifested on-screen by a combination of cinematic techniques (camera position, angle, movement), iconographic choices (visible body parts, clothing, looks, etc.), narrative (speech, in the form of textual transcripts) and auditory components (voice and soundtrack). To incorporate these complex modalities into our annotations, we devised a thesaurus of objectification based on these elements by building on existing characterization in film studies and cognitive and social psychology. These elements produced 11 formalised concepts spanning three modalities (visual, textual, and audio). We selected 20 films from the MovieGraphs dataset, a pre-existing dataset in the computer vision community, to be annotated by human experts. The annotation process involves first delimiting relevant segments for annotation, and for each segment, assigning a label for the level of objectification (which corresponds to the annotator’s level of confidence in whether the clip depicts a character being objectified) along with which the concepts that are present and explain their annotation. Experts used an annotation tool designed specifically for this purpose that incorporates the thesaurus and allows easy sharing and visualisation of these annotations between annotators. The resulting MObyGaze dataset comprises 5,783 video clips. The Figure 1 shows three instances that were annotated by the experts with the maximum level of objectification, showing the diverse modalities that can manifest objectification. We verify the validity of the produced data with annotator agreement measure for both unitisation and categorisation. The resulting dataset comprises 5,783 segments over 43 hours of footage, each annotated by two experts [1, L2].

Figure 1: Examples of segments tagged with a “Sure” level of objectification. Top left: vision modality only. Top right: text modality only. Bottom: multimodal vision, text, and audio concepts producing objectification.

The second step is to explore the design of models best adapted to learn from this unique dataset, tying multimodal explanations to an interpretive task label. Objectification here constitutes an interpretative notion with inherently subjective components, which makes the task and associated benchmark very challenging in several regards, including: (i) concepts (e.g., combination of look and camera positioning) are themselves interpretative, hence more subtle and difficult to detect than, e.g., a yellow beak; (ii) uneven contribution of concepts to the overall objectification label (visual concepts appear more often than auditory ones), which complicates the choice of modality fusion in models. We follow two directions: improving multimodal fusion for trustworthiness, and designing explainable models for such a challenging video interpretation task. Given the complex nature of the task, it is indeed crucial that deep learning solutions be understandable and verifiable by social scientists.

On the one hand, we first show that supervision with concept information enables us to design late-fusion models that achieve performance comparable to early fusion, while being more conducive to explainability. Second, we study how to design models with both high task accuracy and modality trustworthiness. That is, models must not only make accurate predictions, but make accurate predictions for the right reasons (i.e., flagging the right modalities yielding a positive label). We show that specific strategies for fusing atomic models supervised with concepts and trained on modality ensembles achieve advantageous trade-offs between task accuracy and trustworthiness [2].

On the other hand, we study how to design effective explainable models for objectification detection. We show that existing prominent models such as Concept Embedding Models yield poor results on our complex video dataset, compared to legacy usage for, e.g., image recognition for bird species. We conduct an analysis, identifying causes in both the greater difficulty of detecting concepts and the weaker determination of the task by the concepts. We show that building explainable models for this kind of task requires revisiting some of the assumptions commonly made when working with usually larger datasets, and with less interpretative concepts (handling concept noise and allowing raw information to flow to the final classifier) [3].

To conclude, we believe our contributions within the TRACTIVE project represent valuable steps towards advancing computational approaches to help make subtle patterns of bias in audiovisual content visible and more tangible, and quantify their prevalence. Our ongoing works on multimodal and explainable models show that rich per-modality annotations of moderate-size datasets can help design more trustworthy models, essential for applications such as supporting social scientists in analyzing complex social constructs.

Links:

[L1] https://webcms.i3s.unice.fr/TRACTIVE/

[L2] https://anonymous.4open.science/r/MObyGaze-F600/Readme.md

References:

[1] J. Tores, et al., “Visual objectification in films: Towards a new AI task for video interpretation,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), Seattle, WA, USA, 2024.

[2] E. Ancarani, et al., “Leveraging concept annotations for trustworthy multimodal video interpretation through modality specialization,” in Proc. ACM Int. Conf. Multimedia Workshops, Dublin, Ireland, 2025.

[3] J. Tores, et al., “Improving concept-based models for challenging video interpretation,” in Proc. ACM Int. Conf. Multimedia, Dublin, Ireland, 2025.

Please contact:

Lucile Sassatelli

Université Côte d'Azur, CNRS, I3S, France