by Pierre Alliez (Inria), François Forge (Reciproque), Livio de Luca (CNRS MAP), Marc Pierrot-Deseilligny (IGN) and Marius Preda (Telecom SudParis)

One of the limitations of the 3D digitisation process is that it typically requires highly specialised skills and yields heterogeneous results that depend on proprietary software solutions and trial-and-error practices. The main objective of Culture 3D Cloud [L1], a collaborative project funded within the framework of the French “Investissements d’Avenir” programme, is to overcome this limitation by providing the cultural community with a novel image-based modelling service for the 3D digitisation of cultural artefacts. This will be achieved by leveraging the widespread expertise in digital photography within the cultural sector, enabling cultural heritage practitioners to perform routine 3D digitisation via photo-modelling. Cloud computing was chosen because it offers substantial computing resources at reasonable cost, scalable storage via continuously growing virtual containers, dissemination to multiple devices via remote rendering, and efficient deployment of new releases.

Platform

The platform is designed to be versatile in terms of the scale and type of artefacts to be digitised, scalable in terms of storage, sharing and visualisation, and able to output accurate, high-definition 3D models. It is built from modular open-source software solutions [L2, L3], [1, 2, 3]. The current cloud-based platform, hosted by TGIR Huma-Num [L4], supports four simultaneous users through affordable high-performance computing services (8 cores, 64 GB of RAM and 80 GB of disk).

Pipeline

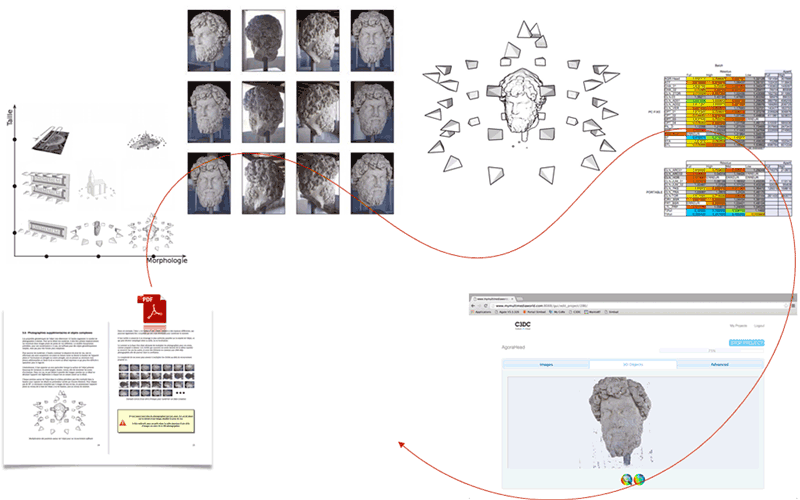

The photo-modelling web service offered by the platform implements a modular pipeline whose options depend on the user mode, which ranges from novice to expert (Figure 1). At a minimum, the acquisition must meet two requirements: it must follow a protocol specific to each type of artefact (statue, façade, building, building interior), and the photos must overlap sufficiently. Image settings such as exposure and white balance can be adjusted or read from the EXIF metadata. The images can be organised into a linear, circular or random sequence. Camera calibration is performed automatically, and a dense photogrammetry approach based on image matching then generates a dense 3D point set with colour attributes. For the artefact shown in Figure 1, the MicMac software [1] generates a set of 14 million points from 26 photos. The output point set can be cleaned of outliers and simplified, after which a Delaunay-based surface reconstruction method turns it into a dense surface triangle mesh with colour attributes.
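The article does not specify how the point set is cleaned and simplified; CGAL's point set processing components [L3] offer operations of this kind. Purely as an illustration, here is a plain-Python sketch of two common techniques: a statistical k-nearest-neighbour outlier filter and a grid-based simplification that keeps one point per cubic cell. The function names and the parameters `k`, `std_ratio` and `cell` are hypothetical and are not CGAL's API; the brute-force neighbour search is only suitable for toy data, not for a 14-million-point cloud.

```python
import math


def remove_outliers(points, k=8, std_ratio=2.0):
    """Statistical outlier filter (hypothetical helper, not CGAL's API).

    Each point is a tuple whose first three entries are x, y, z; any extra
    entries (e.g. RGB colour) are carried along untouched. A point is dropped
    when the mean distance to its k nearest neighbours exceeds the global mean
    of that statistic by more than std_ratio standard deviations.
    Neighbours are found by brute force (O(n^2)), for illustration only.
    """
    n = len(points)
    if n <= k:
        return list(points)
    mean_knn = []
    for i, p in enumerate(points):
        dists = sorted(
            math.dist(p[:3], q[:3]) for j, q in enumerate(points) if j != i
        )
        mean_knn.append(sum(dists[:k]) / k)
    mu = sum(mean_knn) / n
    sigma = math.sqrt(sum((d - mu) ** 2 for d in mean_knn) / n)
    threshold = mu + std_ratio * sigma
    return [p for p, d in zip(points, mean_knn) if d <= threshold]


def grid_simplify(points, cell=0.1):
    """Keep the first point that falls into each cubic cell of side `cell`."""
    kept = {}
    for p in points:
        key = tuple(math.floor(c / cell) for c in p[:3])
        kept.setdefault(key, p)
    return list(kept.values())


# Toy cloud: a 5 x 5 planar grid (spacing 0.1) with colours, plus one stray point.
cloud = [(0.1 * i, 0.1 * j, 0.0, 200, 180, 150) for i in range(5) for j in range(5)]
cloud.append((10.0, 10.0, 10.0, 255, 0, 0))

clean = remove_outliers(cloud, k=4, std_ratio=2.0)  # stray point removed: 25 points remain
simplified = grid_simplify(clean, cell=0.25)        # 4 points, one per occupied cell
```

Filtering before simplifying is a deliberate ordering in this sketch: a grid cell that happens to contain only an outlier would otherwise keep it as a representative.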

Figure 1: The photo-modelling process requires following a well-documented acquisition protocol specific to each type of data, taking a series of high-definition photos, specifying the type of acquisition (linear, circular, random), performing camera calibration (locations and orientations), generating a dense 3D point set with colour attributes via dense image matching, and reconstructing a surface triangle mesh.
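Specifying the type of acquisition matters because it indicates which photos are likely to overlap. The article does not say how the platform uses this information; one plausible reading, assumed here, is that it restricts which image pairs are searched for matches. The sketch below enumerates candidate pairs under that assumption. The function `candidate_pairs` and its `window` parameter (how many following images each photo is matched against) are hypothetical and do not reflect MicMac's interface.

```python
import itertools


def candidate_pairs(n_images, mode, window=2):
    """Return sorted index pairs (i, j), i < j, of images to match.

    linear:   each image is paired with its next `window` images.
    circular: same, but the sequence wraps around (last images pair with first).
    random:   no known ordering, so every pair is tried (n*(n-1)/2 pairs).
    """
    if mode == "random":
        return list(itertools.combinations(range(n_images), 2))
    if mode not in ("linear", "circular"):
        raise ValueError(f"unknown acquisition mode: {mode}")
    pairs = set()
    for i in range(n_images):
        for step in range(1, window + 1):
            j = i + step
            if j >= n_images:
                if mode == "linear":
                    break  # larger steps would also run past the end
                j %= n_images  # circular: wrap around to the start
            if i != j:
                pairs.add((min(i, j), max(i, j)))
    return sorted(pairs)


for mode in ("linear", "circular", "random"):
    print(mode, len(candidate_pairs(26, mode)))
# linear 49
# circular 52
# random 325
```

With an ordered (linear or circular) sequence, the number of pairs grows linearly with the number of photos, whereas an unordered set falls back to quadratic growth; under this assumption, stating the acquisition type is what keeps matching tractable for long sequences.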

We plan to extend the platform to handle series of multifocal photos, fisheye lenses, and photos acquired by unmanned aerial vehicles (drones).

Links:

[L1] http://c3dc.fr/

[L2] http://logiciels.ign.fr/?Micmac

[L3] https://www.cgal.org/ (see components “Point set processing” and “Surface reconstruction”)

[L4] http://www.huma-num.fr/

References:

[1] E. Rupnik, M. Daakir and M. Pierrot-Deseilligny: “MicMac – a free, open-source solution for photogrammetry”, Open Geospatial Data, Software and Standards, 2017.

[2] T. van Lankveld: “Scale-Space Surface Reconstruction”, in CGAL User and Reference Manual. CGAL Editorial Board, 4.10.1 edition, 2017.

[3] P. Alliez, et al: “Point Set Processing”, in CGAL User and Reference Manual. CGAL Editorial Board, 4.10.1 edition, 2017.

Please contact:

Pierre Alliez, Inria, France

Livio de Luca, CNRS, France