by Sagar Dolas (SURF), Ana Verbanescu (Twente University) and Benjamin Czaja (SURF)

Energy is an emerging topic in the scientific computing ecosystem and is becoming a design point for the future of research. Science relies increasingly on digital research computing as a tool for analysis and experimentation. The exponential increase in demand for computing means that classically designed ICT infrastructure will soon become unsustainable in terms of its energy footprint [1]. We need to experiment with energy-efficient methods, tools, algorithms, and hardware technologies. In the Netherlands, we are working towards zero energy waste for high-performance computing (HPC) applications on the national supercomputer “Snellius”. This work involves discussing challenges, proposing new research directions, finding opportunities to engage the user community, and taking steps towards the responsible use of software in research.

Traditionally, supercomputing has focused on improving latency or throughput, which are of great importance for applications such as drug discovery or climate simulation. For decades, we have developed infrastructure, algorithms, and software tools to achieve such improvements. Given the rapid increase in energy usage by ICT services, further emphasised by the imminent energy crisis, it is a priority to understand and optimise the energy consumption of research computing applications [2].

Specifically, our initiative involves working with three stakeholders who need to collaborate to reduce the energy impact: application developers, system integrators, and system operators.

- Application developers are responsible for improving the energy efficiency of their own code, making use of algorithmic, programming, and hardware tools at their disposal. Ideally, applications should be able to adapt to the available system resources and use them effectively. Research into programming models and tools that enable such flexibility is accelerating.

- System integrators are responsible for offering the right resources to application developers and system operators. These resources must include efficient hardware, e.g., different GPGPUs (general-purpose graphics processing units), CPUs (central processing units), or even FPGAs (field-programmable gate arrays), to support different application mixes. Research into procuring systems and provisioning applications with the right resources is essential.

- System operators, who have a holistic view of the system, are responsible for scheduling workloads efficiently on system resources and for potential energy harvesting where resources or systems are heavily underutilised. Research into tools for energy-efficient resource management and scheduling, as well as energy harvesting, is ongoing.

To address the interests and concerns of all these stakeholders, we follow three lines of action:

- Define methods for application characterisation, towards building a detailed application signature in terms of resource utilisation, performance, and energy consumption.

- Co-design system-level tools and platforms that allow operators to formulate recommendations for optimising overall energy consumption and to shape green computing policies for supercomputing systems.

- Co-design frameworks to assess and configure systems procurement and resource provisioning for high-efficiency, low-waste application deployment.
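One way to make the application signature from the first line of action concrete is to record a few per-run quantities and derive efficiency metrics from them. The Python sketch below does this and uses the roofline model, a standard technique substituted here purely for illustration, to flag memory-bound behaviour of the kind discussed in the case study. The `AppSignature` record, its fields, and the peak-performance inputs are hypothetical; real values would come from hardware counters, energy measurements, and the machine's specifications.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppSignature:
    """Hypothetical per-run application signature (illustrative fields only)."""
    flops: float       # total floating-point operations executed
    dram_bytes: float  # total bytes moved between main memory and the processors
    runtime_s: float   # wall-clock time to solution, in seconds
    energy_j: float    # measured energy to solution, in joules

    @property
    def arithmetic_intensity(self) -> float:
        """FLOPs performed per byte of main-memory traffic."""
        return self.flops / self.dram_bytes

    @property
    def gflops(self) -> float:
        """Sustained floating-point rate in GFLOP/s."""
        return self.flops / self.runtime_s / 1e9

    @property
    def gflops_per_watt(self) -> float:
        """Energy efficiency: GFLOP/s per watt, which equals GFLOP per joule."""
        return self.flops / self.energy_j / 1e9

    def is_memory_bound(self, peak_gflops: float, peak_bw_gbs: float) -> bool:
        """Roofline test: below the machine balance point, memory bandwidth caps the rate."""
        machine_balance = peak_gflops / peak_bw_gbs  # FLOP per byte
        return self.arithmetic_intensity < machine_balance
```

Keeping the signature as a small immutable record makes it easy to compare configurations of the same application side by side, for example the same code run with different node counts or processes per node.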

Case study

We performed a strong scaling study of the computational fluid dynamics (CFD) solver Palabos [L1], which is based on the lattice Boltzmann method. We believe Palabos is a typical example of a memory-intensive HPC application, so its performance and energy analysis can serve as a template for other, similar HPC applications.

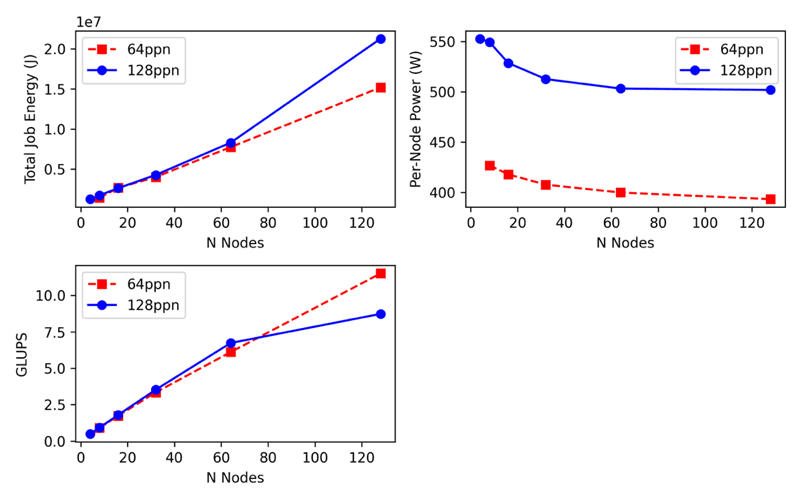

As shown in Figure 1, the application initially scales linearly as the number of nodes increases. At larger node counts the scaling flattens, and we identify memory bandwidth as the factor limiting the code. By lowering the number of processes per node (ppn), we increased the memory bandwidth available to each process, which resulted in much lower energy usage.

Figure 1: Performance and energy scaling behaviour of the Palabos application.
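The strong scaling study in Figure 1 combines two standard quantities: parallel efficiency and energy to solution. The Python sketch below shows one way to compute them and to pick the lowest-energy configuration from a sweep over node counts and ppn values. It is a minimal illustration, assuming that each run's time to solution and average per-node power have been measured; the `Run` record and the function names are hypothetical and are not part of Palabos or of any SURF tool.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One run of a strong-scaling study (fixed global problem size)."""
    nodes: int
    ppn: int                 # MPI processes per node
    runtime_s: float         # wall-clock time to solution, in seconds
    avg_node_power_w: float  # average power per node over the run, in watts


def energy_to_solution(run: Run) -> float:
    """Energy in joules, assuming every node draws its average power for the whole run."""
    return run.nodes * run.avg_node_power_w * run.runtime_s


def parallel_efficiency(base: Run, run: Run) -> float:
    """Strong-scaling efficiency of `run` relative to the baseline `base`."""
    speedup = base.runtime_s / run.runtime_s
    return speedup / (run.nodes / base.nodes)


def least_energy(runs: list[Run], max_runtime_s: float | None = None) -> Run:
    """Configuration with the lowest energy to solution, optionally within a time limit."""
    candidates = [
        r for r in runs if max_runtime_s is None or r.runtime_s <= max_runtime_s
    ]
    if not candidates:
        raise ValueError("no run satisfies the runtime limit")
    return min(candidates, key=energy_to_solution)
```

In a ppn sweep, runs with the same node count but different `ppn` values appear as separate `Run` entries, so `least_energy` can select a lower ppn whenever that reduces energy to solution. If a profiler reports measured per-node energy directly, summing those values would be more accurate than the average-power approximation used here.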

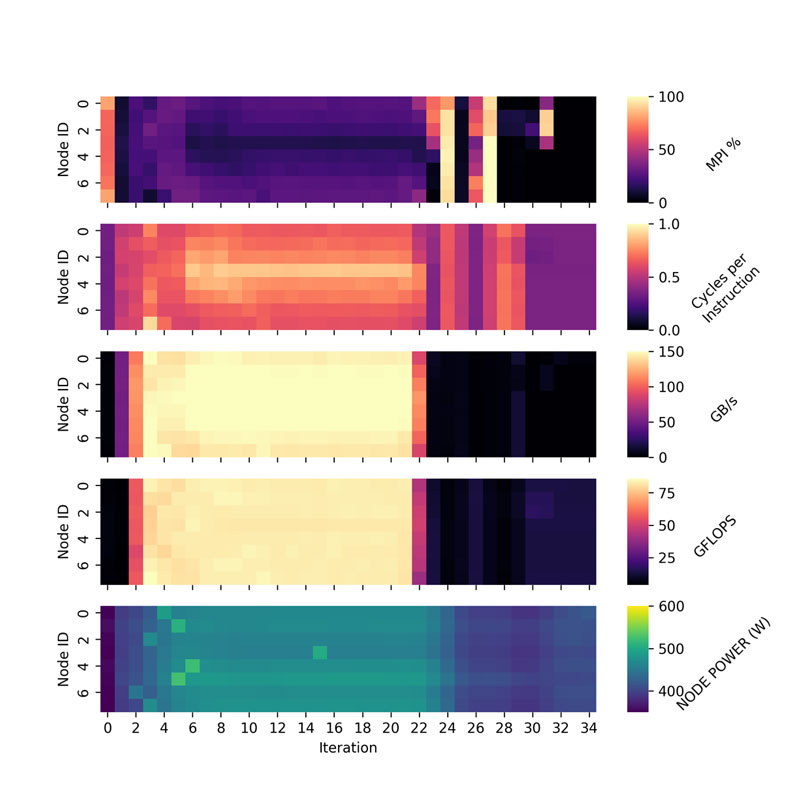

As shown in Figure 2, we can also obtain iteration-level information about the application using the system-level tool Energy Aware Runtime (EAR) [3]. With this fine-grained information, we can profile the application to identify when and where it uses the most energy. Figure 2 shows that Palabos uses the most energy during the “simulation” phase, where the GFLOPS value is also very high.

Figure 2: Heatmap analysis of Palabos.
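A per-phase energy breakdown like the one in Figure 2 reduces to summing power over time within each phase. The Python sketch below shows that aggregation, assuming the iteration-level metrics have already been exported into simple records with a phase label, duration, average power, and GFLOP/s. The `IterationSample` layout and the function names are hypothetical; they do not reflect EAR's actual output format or API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class IterationSample:
    """Hypothetical per-iteration record; this is not EAR's output format."""
    phase: str          # application phase label, e.g. "simulation"
    duration_s: float   # time spent in this iteration, in seconds
    avg_power_w: float  # average power over the iteration (all nodes), in watts
    gflops: float       # sustained GFLOP/s during the iteration


def energy_by_phase(samples: Iterable[IterationSample]) -> Dict[str, float]:
    """Total energy in joules per phase (power times duration, summed)."""
    totals: Dict[str, float] = defaultdict(float)
    for s in samples:
        totals[s.phase] += s.avg_power_w * s.duration_s
    return dict(totals)


def hottest_phase(samples: List[IterationSample]) -> Tuple[str, float, float]:
    """Phase with the largest total energy, its energy, and its time-weighted mean GFLOP/s."""
    energy = energy_by_phase(samples)
    phase = max(energy, key=energy.get)
    in_phase = [s for s in samples if s.phase == phase]
    total_time = sum(s.duration_s for s in in_phase)
    mean_gflops = sum(s.gflops * s.duration_s for s in in_phase) / total_time
    return phase, energy[phase], mean_gflops
```

Applied to a real trace, `hottest_phase` would report where most of the energy is spent together with how compute-intensive that phase is, which is the kind of observation made for the “simulation” phase of Palabos.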

The Palabos analysis demonstrates the value of including energy as a metric alongside traditional performance analysis. It shows how the resources of a cluster can be adjusted for an HPC application to maximise performance and minimise energy usage.

Finally, to address the scientific software community at large, transparency about the energy footprint is necessary. To this end, methods, tools, and metrics are needed to assess the operation of supercomputers and HPC centres in terms of energy consumption and environmental impact. Our energy-efficiency initiative aims at zero waste and energy awareness for research software.

Links:

[L1] https://palabos.unige.ch

[L2] https://www.eas4dc.com

[L3] https://www.surf.nl/en/news/towards-energy-aware-scientific-research-on-snellius-the-dutch-national-supercomputer

[L4] https://www.surf.nl/en/energy-smart-computing

References:

[1] Dutch Data Centers Association, “State of the Dutch data centers - annual report”, 2021. https://www.dutchdatacenters.nl/en/publications/annual-report-2021/

[2] European Commission, “A European Green Deal”. https://ec.europa.eu/info/strategy/priorities-2019-2024/european-green-deal_en

[3] J. Corbalan, L. Brochard, “EAR - Energy Management framework for Supercomputers”. https://www.bsc.es/sites/default/files/public/bscw2/content/software-app/technical-documentation/ear.pdf

Please contact:

Sagar Dolas, SURF, The Netherlands