by Anh Tuan Hoang (SZTAKI) and Zsolt János Viharos (SZTAKI and John von Neumann University)

Human Activity Recognition (HAR) is a prevalent topic in the field of cognitive intelligence, closely related to Human-Centred Artificial Intelligence (HCAI). The emergence of deep learning has brought breakthroughs in many HAR problems. This research proposes a novel search algorithm that determines an optimal deep neural model structure by considering both the internal relationships and the information content of the data, and consequently achieves superior recognition of various types of human activity.

Human-Centred Artificial Intelligence is a rapidly developing and increasingly popular topic that puts humans at the centre of attention when building AI systems. It seeks to preserve human control while ensuring that artificial intelligence meets human needs. HAR builds on the fact that every type of movement causes the human body to generate a characteristic sensor signal, in other words a pattern, which can be recognised effectively by combining digital signal processing with deep learning.

Using deeper neural networks and new types of neural layers, such as convolutional layers, is gaining popularity among researchers. These learning methods automatically form higher-level representations from the raw sensor data. Deep learning therefore provides a more general solution, as it automates feature extraction, whereas in classical machine learning approaches the features are hand-crafted and extracted statically.
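For contrast, the statically extracted features of a classical pipeline are typically fixed statistics computed over sliding windows of the raw signal. The minimal sketch below illustrates that idea in plain Python; the function names, window length, step and the particular statistics (mean, standard deviation, minimum, maximum) are illustrative assumptions, not the feature set used in this work. A deep model would instead learn its features from the raw windows.

```python
import statistics
from typing import List, Sequence


def sliding_windows(signal: Sequence[float], size: int, step: int) -> List[List[float]]:
    """Split a 1D sensor signal into fixed-length, possibly overlapping windows."""
    return [list(signal[i:i + size]) for i in range(0, len(signal) - size + 1, step)]


def handcrafted_features(window: Sequence[float]) -> List[float]:
    """Hand-chosen statistics (an illustrative choice): mean, std, min, max."""
    return [
        statistics.fmean(window),
        statistics.pstdev(window),  # population standard deviation
        min(window),
        max(window),
    ]


# Toy accelerometer-like trace; the feature definitions never adapt to the data.
signal = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
features = [handcrafted_features(w) for w in sliding_windows(signal, size=4, step=2)]
print(features)
```

Whatever the activity, the same four numbers are computed per window; this fixed mapping is exactly what a convolutional network replaces with learned filters.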

An interesting concept was followed by Abid et al. [1] and Wu and Cheng [2], who developed efficient autoencoder-based feature-selection methods. Both studies minimise the reconstruction error obtained from the selected features; however, they do not take into account different types of deep models or the correlation of internal parameters. Moreover, their effectiveness has not yet been validated on sensor signal data. By exploring the internal relationships within the data, significant improvements can be achieved.
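The objective shared by these feature-selection approaches can be illustrated with a deliberately simplified toy. The sketch below is not either published method: it replaces the learned decoder with per-channel simple linear regression and the learned selection mechanism with exhaustive search, keeping only the common idea of choosing the subset of input channels from which all channels can be reconstructed with the smallest error. The channel data, the subset size `k` and all function names are hypothetical.

```python
from itertools import combinations
from statistics import fmean
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y ~ slope * x + intercept."""
    mx, my = fmean(x), fmean(y)
    var_x = sum((a - mx) ** 2 for a in x)
    if var_x == 0.0:
        return 0.0, my  # constant predictor: fall back to the mean of y
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = cov / var_x
    return slope, my - slope * mx


def mse_of_fit(x: Sequence[float], y: Sequence[float]) -> float:
    """Mean squared error of reconstructing y from x with the fitted line."""
    slope, intercept = fit_line(x, y)
    return fmean([(slope * a + intercept - b) ** 2 for a, b in zip(x, y)])


def reconstruction_error(channels: Sequence[Sequence[float]], subset: Tuple[int, ...]) -> float:
    """Mean per-channel error when every channel is rebuilt from the selected subset.

    Selected channels are kept as-is (error 0); every other channel is rebuilt
    from the single selected channel that predicts it best (toy stand-in decoder).
    """
    errors = []
    for c, target in enumerate(channels):
        if c in subset:
            errors.append(0.0)
        else:
            errors.append(min(mse_of_fit(channels[s], target) for s in subset))
    return fmean(errors)


def best_subset(channels: Sequence[Sequence[float]], k: int) -> Tuple[int, ...]:
    """Exhaustive search for the k channels with the lowest reconstruction error."""
    return min(combinations(range(len(channels)), k),
               key=lambda subset: reconstruction_error(channels, subset))


channels = [
    [1.0, 2.0, 3.0, 4.0],
    [2.0, 4.0, 6.0, 8.0],    # redundant: exactly twice channel 0
    [1.0, -1.0, 1.0, -1.0],  # not linearly predictable from channel 0
]
print(best_subset(channels, k=2))
```

The redundant channel never needs to be kept, because it can be reconstructed exactly from channel 0. Exhaustive search grows combinatorially with the number of channels, which is one reason learned selection mechanisms are used in practice.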

To explore the internal relationships within the data through deep neural architecture search, a modified version of the classic autoencoder model was used. Autoencoders are generally used to learn efficient data encodings in an unsupervised manner; the modified version can also determine the appropriate input-output configuration (preliminary research on shallow networks was published by Viharos and Monostori [3]). 1D convolutional and 1D transposed convolutional layers were used to process the sensor signal data efficiently.
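The shape bookkeeping of such a convolutional autoencoder can be illustrated in plain Python. This minimal single-channel sketch uses fixed, hand-picked kernels, with no bias, no activation and no architecture search. In a trained model the kernels are learned and many channels are stacked, and nothing here reproduces the authors' actual layer configuration. It shows that a strided 1D convolution without padding shortens a signal of length L to floor((L - k) / s) + 1 samples, and that a transposed convolution with the same kernel size k and stride s maps it back to (L' - 1) * s + k samples.

```python
from typing import List, Sequence


def conv1d(x: Sequence[float], kernel: Sequence[float], stride: int = 1) -> List[float]:
    """Single-channel 1D convolution without padding (cross-correlation, as in DL libraries)."""
    k = len(kernel)
    return [
        sum(x[i + j] * kernel[j] for j in range(k))
        for i in range(0, len(x) - k + 1, stride)
    ]


def conv_transpose1d(y: Sequence[float], kernel: Sequence[float], stride: int = 1) -> List[float]:
    """Single-channel transposed 1D convolution: each input value scatters a scaled kernel."""
    k = len(kernel)
    out = [0.0] * ((len(y) - 1) * stride + k)
    for i, value in enumerate(y):
        for j in range(k):
            out[i * stride + j] += value * kernel[j]
    return out


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared reconstruction error between two equal-length signals."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)


signal = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
code = conv1d(signal, kernel=[0.5, 0.5], stride=2)                    # encoder: 8 -> 4 samples
reconstruction = conv_transpose1d(code, kernel=[1.0, 1.0], stride=2)  # decoder: 4 -> 8 samples
print(code, reconstruction, mse(signal, reconstruction))
```

The reconstruction error printed last is the quantity an autoencoder is trained to minimise. Here it is 0.25, because the toy encoder simply averages pairs of neighbouring samples and the decoder copies each average back twice.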

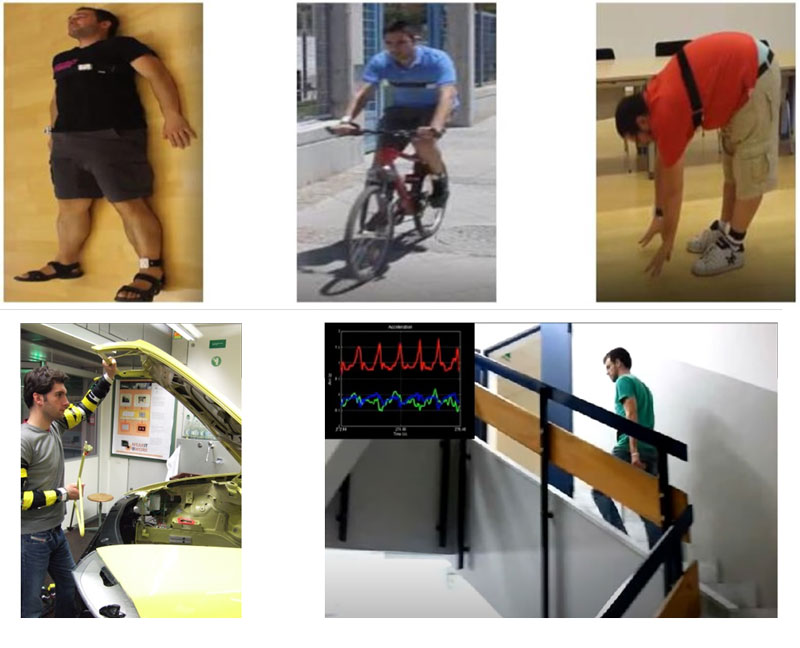

Thanks to the widespread use of modern wearable sensors, more and more public datasets on various human activities have become available to researchers. Several such datasets can now be used freely, including MHEALTH [L1], Skoda [L2] and Smartphones [L3], on which the proposed algorithm was validated (Figure 1).

Figure 1: Example movements and measuring sensors of the human activity recognition datasets. The three pictures at the top represent the MHEALTH dataset, the bottom-left picture shows a typical activity from the Skoda dataset (recorded during the repair of Skoda cars), and the bottom-right picture shows a daily activity measured by a smartphone that people typically carry in their pockets.

After the sensor data were pre-processed, training was carried out with the proposed method, which selects the most significant and descriptive features by exploring the internal dependencies. Adding the reconstructed data, which carries the internal relationships of the data, to the input yields a significant improvement. For the evaluation, a shallow convolutional neural network was used as a reference classifier.
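The text does not specify exactly how the reconstruction is combined with the input. One plausible reading, assumed here, is channel-wise concatenation, so that the reference classifier sees each original sensor channel alongside its reconstruction. The minimal sketch below shows only that data-preparation step, not the shallow CNN itself; the function name `augment_with_reconstruction` and the sample values are hypothetical.

```python
from typing import List, Sequence

# A multichannel window: one list of samples per sensor channel (channels x time steps).
Window = List[List[float]]


def augment_with_reconstruction(original: Sequence[Sequence[float]],
                                reconstructed: Sequence[Sequence[float]]) -> Window:
    """Append the reconstructed channels after the original ones (channel-wise concatenation)."""
    if len(original) != len(reconstructed):
        raise ValueError("original and reconstructed windows need the same number of channels")
    for orig_ch, rec_ch in zip(original, reconstructed):
        if len(orig_ch) != len(rec_ch):
            raise ValueError("every channel must have the same number of time steps")
    return [list(ch) for ch in original] + [list(ch) for ch in reconstructed]


original = [[0.1, 0.4, 0.2], [1.0, 0.9, 1.1]]       # 2 sensor channels, 3 time steps
reconstructed = [[0.2, 0.3, 0.2], [1.0, 1.0, 1.0]]  # autoencoder output, same shape
classifier_input = augment_with_reconstruction(original, reconstructed)
print(len(classifier_input), "channels")  # the classifier's first layer must accept 4 input channels
```

Under this reading the classifier's input width doubles. An element-wise sum, the other natural reading of "added", would keep the channel count unchanged but mix the two signals irreversibly.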

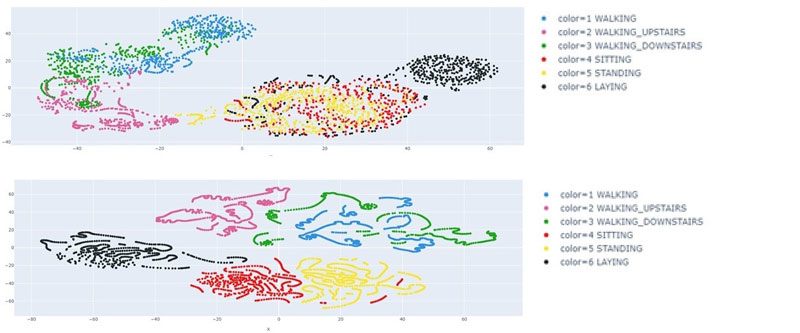

Improvements were achieved on all the chosen benchmark datasets, thereby validating the proposed method. The correctness of the novel algorithm was also checked visually on the Smartphone dataset, using the t-SNE dimensionality reduction procedure to obtain a 2D representation (Figure 2).

Figure 2: t-SNE representation of the learned dependencies on the Smartphone dataset. The top picture shows the classification results of the classical convolutional neural network (CNN) architecture; the bottom picture shows the results of the proposed deep neural architecture search (NAS)-based solution trained on the same dataset. The proposed algorithm clearly yields a more distinctive, and thus superior, modelling solution.

Links:

[L1] http://archive.ics.uci.edu/ml/datasets/mhealth+dataset

[L2] http://har-dataset.org/doku.php?id=wiki:dataset

[L3] https://archive.ics.uci.edu/ml/datasets/human+activity+recognition+using+smartphones

References:

[1] A. Abid, M. F. Balin, J. Y. Zou: “Concrete Autoencoders for Differentiable Feature Selection and Reconstruction”, International Conference on Machine Learning (ICML), 2019.

[2] X. Wu, Q. Cheng: “Fractal Autoencoders for Feature Selection”, AAAI Conference on Artificial Intelligence, 2020.

[3] Zs. J. Viharos, L. Monostori: “Automatic input-output configuration of ANN-based process models and its application in machining”, Lecture Notes in Artificial Intelligence, Multiple Approaches to Intelligent Systems, Springer, pp. 659-668, 1999.

Please contact:

Zsolt János Viharos, SZTAKI and John von Neumann University, Hungary

László Hoang (Anh Tuan Hoang), SZTAKI, Hungary