by Jauwairia Nasir, Utku Norman, Barbara Bruno and Pierre Dillenbourg (EPFL)

The project JUSThink has a double goal: (i) to help train children's Computational Thinking skills through a collaborative, robot-mediated activity, and (ii) to gain insights into how children detect and resolve misunderstandings, and what keeps them engaged with a task, their partner or a robot. The result? An abstract reasoning task with a few pedagogical tricks and a basic “robot CEO” that can keep 100 ten-year-olds engaged and, by turns, frustrated and jubilant!

“Computational thinking (CT) is going to be needed everywhere. And doing it well is going to be a key to success in almost all future careers.” These words of Stephen Wolfram [L1] capture the urgency of efforts to introduce CT into educational curricula before high school. In parallel, robots are increasingly being used in educational settings around the globe, including some attempts to use them to help students develop CT skills. However, finding pedagogical designs that effectively develop such skills remains challenging.

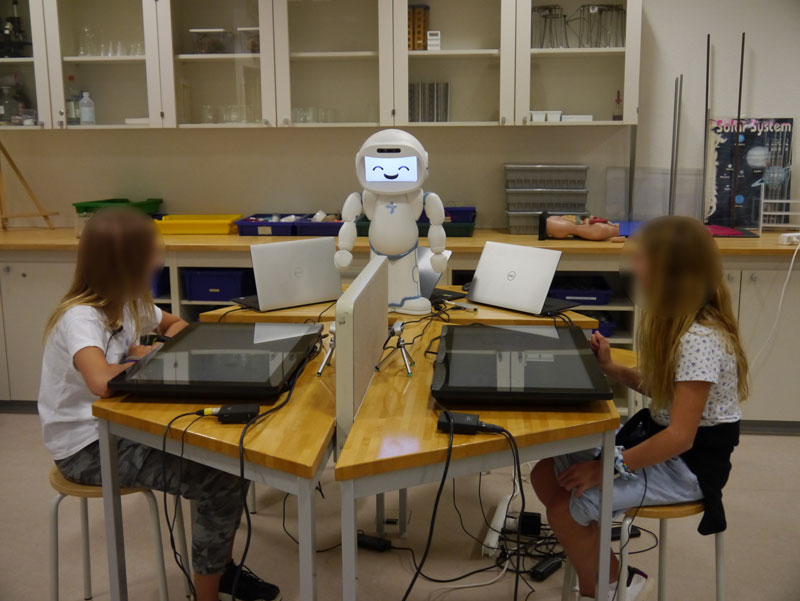

To address this need, the “JUSThink” project [L2], started in September 2018 at the Computer-Human Interaction in Learning and Instruction (CHILI) Laboratory at École Polytechnique Fédérale de Lausanne (EPFL), aims to improve children's computational thinking skills by exercising abstract reasoning with and through graphs, where graphs serve as a way to represent, reason about and solve a problem. JUSThink builds on earlier work [1] and aims to foster children's understanding of abstract graphs through a collaborative problem-solving task, in a setup consisting of a QTrobot and touch screens as input devices (shown in Figure 1). JUSThink is being developed as part of the EU's Horizon 2020 ANIMATAS Innovative Training Network, which aims to improve the social interaction capabilities of robots for learning and education [L3].

The design of the activity is grounded in pedagogical and learning theories of collaboration and constructivism, while the role of the robot (an informed CEO who still needs help) is partially inspired by the protégé effect. Furthermore, the activity is designed to surface cues relevant to (i) learners' engagement with the task at hand, their partner and the robot, and (ii) mutual understanding and misunderstandings between the learners. Given the importance of these cues for the pedagogical goal of the activity, the ultimate goal of JUSThink is to enable the design of a robot capable of understanding and monitoring them and, if necessary, intervening.

To this end, we brought the setup to several international schools in Switzerland over a span of two weeks, where around 100 children (age: M = 10.5, SD = 1.36; median = 10) took part in pairs in a one-hour interaction with the setup.

Pairs of children are welcomed to the setup shown in Figure 1 by the QTrobot, acting as the CEO of a gold-mining company that is looking to hire. After the robot's welcome, the children introduce themselves to the robot and each take a brief individual test. The robot then explains the goal of the activity: to build railroads connecting all of the company's gold mines, which are spread across the mountains of Switzerland, while spending as little money as possible, since the company has a limited budget (in other words, solving a “Minimum Spanning Tree” problem). It also illustrates how they can interact with the setup. After the signal “Are you ready? Let's go!”, the children take on two roles: one draws and erases tracks, while the other reasons about the moves based on the costs shown, and they swap roles every two moves. Once they agree on a solution, the children submit it to the robot CEO, which, in addition to giving them basic guidance and encouragement, tells them how close their solution is to the best possible one.

The collaborative turn-taking design, with a barrier between the children so that each of them only ever has partial information, is inspired by two hypotheses: (i) partial information scaffolds collaboration when there is a shared goal, and (ii) verbally justifying past and future moves to oneself or to the partner in terms of cost can provide an initial grounding for abstract reasoning.
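The robot CEO's feedback depends on knowing the cheapest possible railroad network, which is a minimum spanning tree of the graph whose nodes are the mines and whose weighted edges are the candidate tracks. The article does not say how the activity computes this or how “closeness” is measured, so the following is only an illustrative sketch under stated assumptions: it uses Kruskal's algorithm with a union-find structure to obtain the optimal cost, checks that a submitted set of tracks connects every mine, and reports closeness as the extra money spent over the optimum. The mine labels, costs and the function names `mst_cost`, `connects_all_mines` and `evaluate_submission` are hypothetical.

```python
from typing import Dict, Iterable, List, Optional, Tuple

# A candidate railroad track: (mine_a, mine_b, cost). Hypothetical representation.
Edge = Tuple[str, str, int]


class DisjointSet:
    """Union-find over mine labels, used to detect cycles and connectivity."""

    def __init__(self, nodes: Iterable[str]) -> None:
        self.parent: Dict[str, str] = {n: n for n in nodes}

    def find(self, x: str) -> str:
        # Path halving keeps the trees shallow.
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> bool:
        # Returns False if a and b were already connected (the track would close a cycle).
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return False
        self.parent[rb] = ra
        return True


def mst_cost(nodes: List[str], edges: List[Edge]) -> int:
    """Kruskal's algorithm: add the cheapest tracks that do not close a cycle."""
    ds = DisjointSet(nodes)
    total = 0
    for a, b, cost in sorted(edges, key=lambda e: e[2]):
        if ds.union(a, b):
            total += cost
    return total


def connects_all_mines(nodes: List[str], chosen: List[Edge]) -> bool:
    """True if the chosen tracks link every mine into a single network."""
    ds = DisjointSet(nodes)
    for a, b, _ in chosen:
        ds.union(a, b)
    return len({ds.find(n) for n in nodes}) == 1


def evaluate_submission(nodes: List[str], edges: List[Edge], chosen: List[Edge]) -> Optional[int]:
    """Assumed closeness measure: extra cost over the optimum, or None if a mine is unconnected."""
    if not connects_all_mines(nodes, chosen):
        return None
    spent = sum(cost for _, _, cost in chosen)
    return spent - mst_cost(nodes, edges)


if __name__ == "__main__":
    # Hypothetical map with four mines and five candidate tracks.
    mines = ["A", "B", "C", "D"]
    tracks = [("A", "B", 3), ("A", "C", 1), ("B", "C", 2), ("C", "D", 4), ("B", "D", 5)]
    print(mst_cost(mines, tracks))  # 7
    print(evaluate_submission(mines, tracks, [("A", "B", 3), ("B", "C", 2), ("B", "D", 5)]))  # 3
    print(evaluate_submission(mines, tracks, [("A", "C", 1), ("B", "C", 2)]))  # None
```

Expressing closeness as a cost difference is just one plausible choice; a ratio to the optimal cost, or the number of tracks that differ from an optimal tree, are alternatives a robot could use for its feedback.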

Figure 1: QTrobot welcomes children to the activity.

Surprisingly, the children reported without exception that they really enjoyed the activity, and expressed interest in integrating it into school activities even though they found it challenging. They also really liked their robot CEO, finding it knowledgeable, helpful and friendly.

What comes next for us as researchers? To help improve learning outcomes in this context of human-human-robot interaction, this user study and the multi-modal data collection are just the beginning. We aim to extract relevant behaviours from the data generated (logs from the apps, facial and lateral videos, audio) and relate them to learning, in order to explore models of engagement and mutual modelling in collaboration with Sorbonne University and Télécom Paris in France. Eventually, these models should allow the robot to adapt its behaviour effectively in real time, both to advance learning and to sustain appropriate human-human-robot interaction in educational contexts, so that the next time we go to schools, we are one step closer to “Educational Technologies for the Future”.

Moral of the story: Children are more accepting of challenging educational activities than we think.

Links:

[L1] https://kwz.me/hEM

[L2] https://kwz.me/hER

[L3] http://www.animatas.eu/

References:

[1] J. Nasir, U. Norman, et al.: “Robot Analytics: What Do Human-Robot Interaction Traces Tell Us About Learning?,” IEEE RO-MAN 2019 Conference, 2019.

Please contact:

Jauwairia Nasir

EPFL, Switzerland

Utku Norman

EPFL, Switzerland