by Christoph Posch

At AIT, the Austrian Institute of Technology, we have developed the next generation of biomimetic, frame-free vision and image sensors. Based on the seminal research on neuromorphic electronics at Caltech in the 1990s and further developed in collaboration with the Institute of Neuroinformatics at the ETH Zürich, ATIS is the first optical sensor to combine several functionalities of the biological ‘where’ and ‘what’ systems of the human visual system. Following its biological role model, this sensor processes visual information in a massively parallel fashion using energy-efficient, asynchronous, event-driven methods.

Neuromorphic Systems

Biological sensory and information processing systems still outperform the most powerful computers in routine functions involving perception, sensing and motor control, and are, most strikingly, orders of magnitude more energy-efficient. The reasons for the superior performance of biological systems are still only partly understood, but it is apparent that the hardware architecture and the style of computation in nervous systems are fundamentally different from those applied in artificial synchronous information processing.

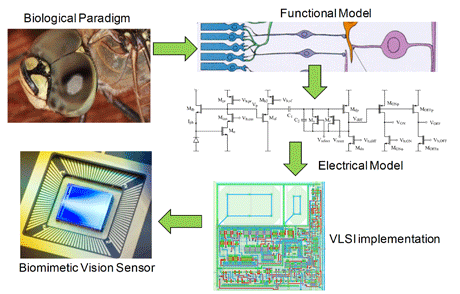

In the late 1980s it was demonstrated that modern semiconductor VLSI technology is able to implement electrical circuits that mimic biological neural functions and allow the construction of neuromorphic systems, which – like the biological systems they model – process information using energy-efficient, asynchronous, event-driven methods. The greatest successes of neuromorphic systems to date have been in the emulation of peripheral sensory transduction, most notably in vision.

Learning from Nature

Conventional image sensors acquire visual information time-quantized at a predetermined frame rate. Each frame carries the information from all pixels, regardless of whether this information has changed since the last frame was acquired. If future artificial vision systems are to succeed in demanding applications such as autonomous robot navigation, high-speed motor control and visual feedback loops, they must exploit the power of the asynchronous, frame-free, biomimetic approach. This means leaving behind the unnatural limitation of frames: systems must be driven and controlled by events happening within the scene in view, and not by artificially created timing and control signals that have no relation whatsoever to the source of the visual information: the world.

Translating the frameless paradigm of biological vision to artificial imaging systems implies that control over the acquisition of visual information is no longer imposed externally on an array of pixels; instead, decision making is transferred to the individual pixel, which handles its own information. The notion of a frame disappears completely and is replaced by a spatio-temporal volume of luminance-driven, asynchronous events.

Studies of biological vision have identified two types of retina-brain pathways in the human visual system: the transient Magno-cellular pathway and the sustained Parvo-cellular pathway. The Magno-system is sensitive to changes and movements. Its biological role is demonstrated, for instance, by our ability to detect dangers that arise in peripheral vision. It is referred to as the ‘where’ system. Once an object is detected, the detailed visual information (spatial details, color, etc.) seems to be carried primarily by the Parvo-system, which is known as the ‘what’ system. Practically all conventional image sensors belong on the ‘what’ side; they completely neglect the dynamic information provided by the natural scene and perceived in nature by the ‘where’ system.

Figure 1. Biology guides vision and image sensor development.

Biomimetic Vision Sensors

Modelling of the Magno-cellular pathway has recently led to the first generation of real-world deployable biomimetic, asynchronous vision devices, known as ‘dynamic vision sensors’ (DVS), in which each pixel responds autonomously to temporal contrast in the scene. Computer vision systems based on these sensors already show remarkable performance in various applications.
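The idea of a pixel responding to temporal contrast can be illustrated with a minimal software sketch. It is not a description of the actual DVS circuit: the function name `temporal_contrast_events`, the logarithmic intensity comparison and the threshold value are assumptions chosen for illustration only.

```python
import math


def temporal_contrast_events(samples, threshold=0.15):
    """Emit ON/OFF events for one hypothetical pixel.

    samples   -- list of (time, intensity) pairs, intensity > 0
    threshold -- assumed contrast threshold on the log-intensity scale
    Returns a list of (time, polarity) with polarity +1 (ON) or -1 (OFF).
    """
    events = []
    _, first_intensity = samples[0]
    reference = math.log(first_intensity)  # level stored at the last event
    for time, intensity in samples[1:]:
        diff = math.log(intensity) - reference
        # A large change produces several events, one per threshold step.
        while abs(diff) >= threshold:
            polarity = 1 if diff > 0 else -1
            events.append((time, polarity))
            reference += polarity * threshold
            diff = math.log(intensity) - reference
    return events


if __name__ == "__main__":
    # A static scene followed by a sudden brightening at t = 2.
    pixel_trace = [(0, 100.0), (1, 100.0), (2, 200.0), (3, 200.0)]
    for event in temporal_contrast_events(pixel_trace):
        print(event)
```

In this sketch a pixel that sees no change produces no output at all, which is the property that distinguishes the event-driven approach from frame-based readout.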

Taking further inspiration from the fundamental concepts of biological vision, it is clear that combining the functionalities of the ‘where’ and ‘what’ systems could open up a whole new level of sensor functionality and performance, and inspire new approaches to image data processing. A first step towards this goal is ATIS (Asynchronous, Time-based Image Sensor), the first visual sensor that combines functionalities of the biological ‘where’ and ‘what’ systems by employing multiple bio-inspired approaches such as pulse modulation imaging, temporal contrast dynamic vision and asynchronous, ‘spike’-based neural information encoding and data communication.

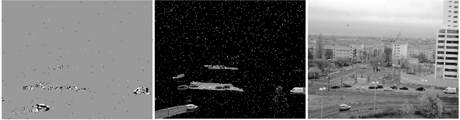

In each ATIS pixel, a change detector initiates a pixel-individual exposure, that is, the measurement of a new illumination value, only after a change has been detected in the field of view of that pixel. Such a pixel independently and asynchronously requests output bandwidth only when it has a new illumination value to communicate. In addition, the asynchronous operation avoids the time quantization of frame-based acquisition. In standard terms, this operating principle results in highly efficient video compression through temporal redundancy suppression at the focal plane, while the asynchronous, time-based exposure encoding yields exceptional dynamic range, improved signal-to-noise ratio and high temporal resolution.
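The change-triggered exposure described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the sensor's actual circuit behaviour: the relative-change test, the `contrast` and `charge` parameters, and the model in which the exposure time is the time needed to integrate a fixed charge (so that illumination is recovered as its inverse) are all hypothetical choices made for the example.

```python
def change_triggered_exposures(samples, contrast=0.2, charge=1.0):
    """Simulate one hypothetical pixel with change-triggered exposure.

    samples  -- list of (time, illumination) pairs, illumination > 0
    contrast -- assumed relative change needed to trigger a new exposure
    charge   -- assumed fixed charge to integrate during an exposure
    Returns a list of (trigger_time, exposure_time, decoded_illumination).
    """
    measurements = []
    _, reference = samples[0]  # illumination at the last measurement
    for time, illumination in samples[1:]:
        if abs(illumination - reference) / reference >= contrast:
            # Time-based encoding: brighter light integrates faster,
            # so the value is carried by a duration, not a voltage.
            exposure_time = charge / illumination
            decoded = charge / exposure_time
            measurements.append((time, exposure_time, decoded))
            reference = illumination
    return measurements


if __name__ == "__main__":
    trace = [(0, 100.0), (1, 100.0), (2, 101.0),
             (3, 150.0), (4, 150.0), (5, 50.0)]
    results = change_triggered_exposures(trace)
    for trigger_time, exposure_time, decoded in results:
        print(trigger_time, exposure_time, decoded)
    print(f"{len(results)} measurements for {len(trace) - 1} samples")
```

A frame-based sensor would report all five later samples of this trace. The sketched pixel reports only the two that differ sufficiently from its last measurement, which is the temporal redundancy suppression referred to above.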

Figure 2. Temporal contrast change events, change-triggered exposure data starting from an empty image, and continuous-time video stream. The event-based video contains the same information as standard frame-based video at data rates reduced by orders of magnitude.

The target applications for this device include high-speed dynamic machine vision systems that take advantage of the real-time change information and frame-free, continuous-time image data, as well as low-data-rate video as required, for instance, by wireless or TCP-based applications. Wide-dynamic-range, high-quality, high-temporal-resolution imaging and video for scientific tasks are at the other end of the application spectrum.

Please contact:

Christoph Posch

AIT – Austrian Institute of Technology

Tel: +43 50550 4116

E-mail: