by Tamás Cserteg, Gábor Erdős and Gergely Horváth (MTA SZTAKI)

Several linked research projects on human-robot collaboration have been carried out at MTA SZTAKI with the aim of developing ergonomic, gesture-driven robot control methods. Although robots are already used in many different fields, the next generation of robots will need to work in shared workplaces with human operators, complementing rather than substituting their work. Implementing such shared workplaces raises new problems, such as ensuring the safety of the human operator and defining a simple and unambiguous communication interface between the human operator and the robot. To overcome these problems, a detailed, up-to-date model of the workcell, i.e., a digital twin, has been created.

To achieve our goal of defining a communication interface between human and robot, we first needed a high-level method to monitor the actual state of the robot as well as of the operator(s) present. To this end we created the digital twin of our workcell, which consisted of a Microsoft Kinect sensor (version 2), a UR5 robot and a human operator. For the twin to be usable, it not only has to be loaded with real-time data, it must also be properly calibrated. Once calibrated, the data from the digital twin (e.g., the past positions of the operator) can be used for human gesture recognition, which in turn can be used to control the robot.

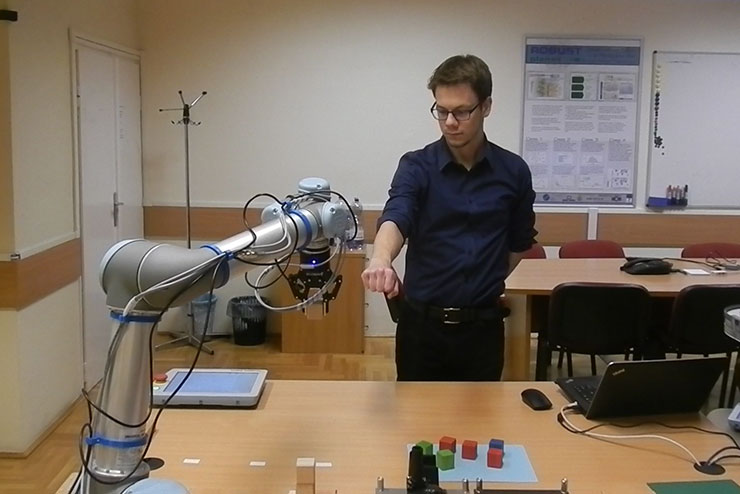

Once we’d created and calibrated the digital twin we were able to perform a simple hand-over scenario between human and robot, implementing gesture communication, the details of which are summarised below.

Digital Twin

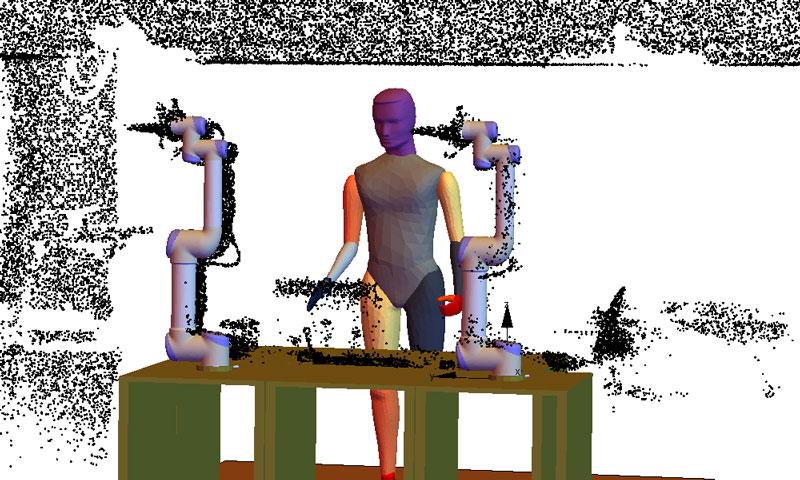

The base of the system is the digital twin, which is a digital representation of a workcell, containing as much information about it as possible. It includes the current states of the various pieces of equipment (robots, production or warehouse units, etc.), sensor data and even related database connections. Figure 1 shows a partial visualisation of the digital twin: a digital model extended with measured point cloud data.

Figure 1: Virtual model extended with measured data.

Figure 2: Hand over in action.

Near real-time updating of the digital twin is crucial, so that the digital twin provides the same information as the real-world workcell would. The digital twin can be used as a centralised source of information, or it can be used to increase the amount of available information. It can also be used to implement virtual sensors by applying the necessary calculations to the digital twin. It is also possible to check the potential outcome of a given command by running simulations on the digital twin. Furthermore, if the simulation is fast enough, it may also be used as feedback in the control loop. The digital twin of the workcell, shared by humans and robots, is modelled as a linkage, which is capable of capturing the geometric and kinematic relations of the static and moving objects.

Calibration

In robotics, calibration usually means calculating the joint and link dimension errors of robotic arms, which matters for precise pick and move scenarios. However, calculating the transformation between a local reference frame and some pre-defined global reference frame can also be viewed as calibration. Throughout our work and in the referenced paper we use the latter definition. This yields an accurate virtual model (the digital twin itself) that contains the current, updated state of the workcell and can serve as an input for any kind of workcell control. Calibration tasks can be daunting, time-consuming and labour-intensive. In our work (presented in [1]) we aimed for a solution that was as modular and automated as possible. Automation is key: it improves the precision of the calibration by eliminating human errors, and it frees the human operator from a time-consuming task. Modularity, in turn, is vital when gluing together various modules.
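To illustrate what the result of such a calibration is used for, the following minimal sketch applies a rigid transformation to map a point measured in a sensor's local frame into the global frame. It is not the point cloud based method of [1]; the function names, the restriction to a rotation about the z axis, and all numeric values are assumptions made purely for illustration.

```python
import math

def make_transform(yaw_rad, translation):
    """Build a 4x4 homogeneous transform: rotation about z, then translation."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    tx, ty, tz = translation
    return [
        [c,   -s,  0.0, tx],
        [s,    c,  0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

def to_global(transform, point):
    """Map a 3D point from the local frame into the global frame."""
    x, y, z = point
    homogeneous = (x, y, z, 1.0)
    return tuple(
        sum(transform[row][col] * homogeneous[col] for col in range(4))
        for row in range(3)
    )

# Hypothetical calibration result: the sensor frame is rotated by 90 degrees
# about the global z axis and shifted by 1 m along x and 2 m along z.
sensor_to_global = make_transform(math.pi / 2, (1.0, 0.0, 2.0))

# A point measured 0.5 m along the sensor's own x axis.
point_in_sensor_frame = (0.5, 0.0, 0.0)
point_in_global_frame = to_global(sensor_to_global, point_in_sensor_frame)
```

Once every device in the workcell has such a transformation, all measurements can be expressed in one common frame, which is what allows them to be combined in a single model.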

Virtual Sensors

In the use case implemented in [2], the operator who controls each robot, namely the one closest to it, has to be chosen. To choose the right person, the distance of every human operator from both robots has to be measured. It would be challenging to make these measurements in the real world with actual sensors, so virtual sensors are applied instead. With the aid of the virtual sensors we are able to make the distance measurements in the virtual model, find the closest operator for every robot and retrieve their ID, which can be sent back to the processing algorithm. This use case demonstrates the possibility of integrating virtual sensors in the digital twin of a workcell. While distance measurement was used here to authorise personnel to operate the robots based on their position, virtual sensors have the potential for diverse utilisation. For example, the virtual model can be extended with colour data, making it possible to recognise QR-code stamps or even the faces of the operators. Virtual sensors can also be used to measure the velocity or acceleration of the robots without encumbering potentially low-capacity equipment.
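The distance-based selection described above can be sketched as follows. This is a minimal illustration, assuming that the digital twin exposes the positions of robots and operators in a common global frame; the dictionaries, identifiers and coordinates are hypothetical and do not come from [2].

```python
import math

def closest_operator(robot_position, operator_positions):
    """Return (operator_id, distance) of the operator nearest to the robot,
    or None if no operator is present."""
    if not operator_positions:
        return None
    return min(
        ((op_id, math.dist(robot_position, pos))
         for op_id, pos in operator_positions.items()),
        key=lambda pair: pair[1],
    )

def assign_operators(robot_positions, operator_positions):
    """Virtual distance sensor: find the closest operator for every robot."""
    return {
        robot_id: closest_operator(pos, operator_positions)
        for robot_id, pos in robot_positions.items()
    }

# Hypothetical positions (in metres) read from the virtual model.
robots = {"robot_a": (0.0, 0.0, 0.0), "robot_b": (4.0, 0.0, 0.0)}
operators = {1: (1.0, 0.0, 0.0), 2: (3.5, 1.0, 0.0)}

assignment = assign_operators(robots, operators)
```

Because the measurement is computed on the model rather than by dedicated hardware, adding another robot or operator only adds an entry to the input data.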

Gesture Recognition

Gesture recognition is the mathematical formulation and capturing of human motion using a computational device. From the global coordinates of the human joints (returned by the official Microsoft Kinect SDK), the positions of the bones making up the skeleton of the human operator are calculated, as the posture of the bones is the basis of the recognition. The angular positions of bones relative to other bones, and the global angular positions of bones relative to the global axes, form the input of the clustering-based gesture recognition.
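The angular features mentioned above can be sketched in a few lines. The example below is only an illustration: the joint coordinates are made up, the choice of the y axis as the global "up" direction is an assumption, and the clustering step itself is not shown.

```python
import math

def bone(joint_from, joint_to):
    """Bone vector pointing from one joint to the next."""
    return tuple(b - a for a, b in zip(joint_from, joint_to))

def angle_between(u, v):
    """Angle between two 3D vectors, in degrees."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0.0:
        raise ValueError("zero-length bone vector")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))

# Hypothetical global joint coordinates (metres) of one arm.
shoulder = (0.0, 1.5, 2.0)
elbow = (0.25, 1.5, 2.0)
wrist = (0.25, 1.75, 2.0)

upper_arm = bone(shoulder, elbow)
forearm = bone(elbow, wrist)

GLOBAL_UP = (0.0, 1.0, 0.0)  # assumed global axis

# Feature vector: one relative angle and two global angles.
features = [
    angle_between(upper_arm, forearm),
    angle_between(upper_arm, GLOBAL_UP),
    angle_between(forearm, GLOBAL_UP),
]
```

Feature vectors of this kind, computed for each frame, are independent of where the operator stands in the workcell, which makes them a convenient input for grouping similar postures.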

Gesture Communication

During any kind of cooperation, be it human-human, human-robot or even robot-robot, the participating entities have to be able to communicate with each other in a way that is unambiguous. When a human participates in the interaction, it is also important to make the communication as comfortable and natural as possible. As two-thirds of human communication is non-verbal, special robot movement patterns can be used to communicate the robot's state to the worker. Combined with gesture control, this two-way exchange of information is what we call gesture communication, and special patterns have been created to signal multiple state changes. In paper [3] we implemented a robot-human tool handover scenario, where the operator can take the tool at any arbitrary position. The robot follows the hand of the operator and distinguishes between mere movement and the intention to take the tool from its gripper. Gesture communication was used to signal the robot's state to the human operator.

The research presented in this paper has been supported by the GINOP-2.3.2-15-2016-00002 grant on an “Industry 4.0 research and innovation center of excellence”, the EU H2020 Grant SYMBIO-TIC No. 637107 and the Hungarian Scientific Research Fund (OTKA), Grant No. 113038.

References:

[1] G. Horváth, G. Erdős: “Point cloud based robot cell calibration”, CIRP Annals 2017.

[2] G. Horváth, G. Erdős: “Gesture Control of Cyber Physical Systems”, Procedia CIRP 2017.

[3] T. Cserteg, G. Erdős, G. Horváth: “Assisted assembly process by gesture controlled robots”, Procedia CIRP 2018.

Please contact:

Gergely Horváth,

MTA SZTAKI, Hungary

+36 1 279 6181