by Steven Pemberton

It is now more than 20 years since the World Wide Web started. In the early days of the web, the rallying cry was “If you have information it should be on the web!” There are tales, possibly apocryphal, of companies not understanding this and initially refusing to put their information online. One mail-order company is reported to have refused to put its catalogue online because “they didn't want just anybody getting hold of it”! Luckily that sort of attitude has now mostly disappeared, and people are eager to make their information available on the web, not least because it increases the potential readership and is generally cheaper than other methods of promulgation.

However, nowadays, with the coming of the semantic web and linked open data, the call has become “if you have data it should be on the web!”: if you have information, it should be machine-readable as well as human-readable, so that it is useful in more contexts. And, alas, once again there seems to be a reluctance amongst those who own the data to make it available. One good, and definitely not apocryphal, example is the report that the Amsterdam Fire Service needed access to data on where roadworks were being carried out, so that fire engines could reach fires as quickly as possible by avoiding blocked routes. You would think that the owners of such data would release it as fast as possible, but in fact the Fire Service had the greatest of trouble getting hold of it. [1]

But releasing data is not just about saving lives. In fact, one of the fascinating aspects of open data is that it enables applications that weren't thought of until the data was released, such as improving maps [2], mapping crime, or visualising where public transport is in real time [3].

The term “Open Data” often goes hand in hand with the term “Big Data”, where large data sets are released to allow analysis. But the Cinderella of the Open Data ball is Small Data: small amounts of data, possibly essential nonetheless, that are too small to be put into a database or online dataset where they could be put to use.

Typically such data is on websites. It may be the announcement of a conference, or a blog review of a film, a restaurant, or some merchandise. Considered alone, such data is not especially useful, but as the British say, “Many a mickle makes a muckle”: many small things brought together make something large. If all the reviews of a film could be aggregated, together they would be much more useful.

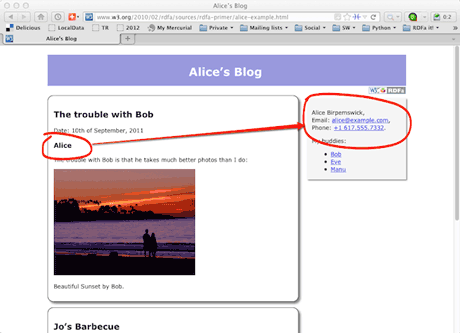

The fairy godmother that can bring this small data to the ball is a W3C standard called RDFa [4] (ERCIM is the European host of W3C), whose second edition (version 1.1) was recently released. RDFa is a lightweight layer of attributes that can be used on HTML, XHTML, or any other XML format, such as Open Document Format (ODF) files [5], to make text that is intended to be human-readable machine-readable as well. For instance, the markup

```html
<!-- Assumed binding: event: maps to the W3C RDF Calendar (iCal) vocabulary so RDFa 1.1 processors can expand the CURIEs -->
<div prefix="event: http://www.w3.org/2002/12/cal/icaltzd#" typeof="event:Vevent">
  <h3 property="event:summary">WWW 2014</h3>
  <p property="event:description">23rd International World Wide Web Conference</p>
  <p>To be held from
    <span property="event:dtstart" content="2014-04-07">7th April 2014</span>
    until <span property="event:dtend" content="2014-04-11">11th April</span>,
    in <span property="event:location">Seoul, Korea</span>.</p>
</div>
```

marks up an event, and allows an RDFa-aware processor or scraper to extract the essential information about the event in the form of RDF triples, thus making it available for wider use. It could also allow a smart browser, or a suitable browser plugin, to extract the data and make it easier for the user to act on it (for instance, by adding the event to an agenda).
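To make the extraction step concrete, here is a deliberately minimal Python sketch using only the standard library's `html.parser`. It handles just four RDFa attributes (`prefix`, `typeof`, `property` and `content`), gives each `typeof` element a new blank-node subject, and assumes the `event:` prefix is bound to the W3C RDF Calendar vocabulary `http://www.w3.org/2002/12/cal/icaltzd#`. The class name `MiniRDFaParser` and the page address `http://example.org/events.html` are hypothetical. This is an illustration, not a conformant RDFa 1.1 processor, which would also have to handle `about`, `resource`, `rel`, `vocab`, datatypes, languages and chaining.

```python
from html.parser import HTMLParser

RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class MiniRDFaParser(HTMLParser):
    """Hypothetical sketch of a tiny RDFa subset: @prefix, @typeof, @property, @content.

    Ignores @about, @resource, @href, @rel/@rev, @vocab, datatypes, language tags,
    and the case of @typeof and @property on the same element.
    """

    def __init__(self, doc_iri):
        super().__init__()
        self.triples = []
        self.bnodes = 0
        # One entry per open element: (subject, prefixes in scope, pending properties, text parts)
        self.stack = [(doc_iri, {}, None, None)]

    def expand(self, curie, prefixes):
        # Expand "prefix:local" with the prefixes in scope; leave anything else unchanged.
        prefix, sep, local = curie.partition(":")
        if sep and prefix in prefixes:
            return prefixes[prefix] + local
        return curie

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        subject, prefixes, _, _ = self.stack[-1]
        if a.get("prefix"):
            # @prefix holds whitespace-separated "name: IRI" pairs, scoped to this element.
            prefixes = dict(prefixes)
            tokens = a["prefix"].split()
            for name, iri in zip(tokens[0::2], tokens[1::2]):
                prefixes[name.rstrip(":")] = iri
        if a.get("typeof"):
            # A new typed resource (a blank node) becomes the subject for the children.
            subject = f"_:b{self.bnodes}"
            self.bnodes += 1
            for t in a["typeof"].split():
                self.triples.append((subject, RDF_TYPE, self.expand(t, prefixes)))
        props = a.get("property")
        if props and a.get("content") is not None:
            # @content overrides the human-readable text, e.g. an ISO 8601 date.
            for p in props.split():
                self.triples.append((subject, self.expand(p, prefixes), a["content"]))
            props = None
        if tag in VOID_TAGS:
            return  # void elements never get an end tag, so nothing is pushed
        self.stack.append((subject, prefixes, props, [] if props else None))

    def handle_data(self, data):
        # Text counts towards every enclosing element that is still collecting a literal.
        for _, _, _, parts in self.stack:
            if parts is not None:
                parts.append(data)

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or len(self.stack) == 1:
            return  # void element, or a stray end tag with nothing left to close
        subject, prefixes, props, parts = self.stack.pop()
        if props:
            # Whitespace is normalised here for readability; real RDFa keeps it verbatim.
            literal = " ".join("".join(parts).split())
            for p in props.split():
                self.triples.append((subject, self.expand(p, prefixes), literal))


SNIPPET = """<div prefix="event: http://www.w3.org/2002/12/cal/icaltzd#" typeof="event:Vevent">
  <h3 property="event:summary">WWW 2014</h3>
  <p property="event:description">23rd International World Wide Web Conference</p>
  <p>To be held from
    <span property="event:dtstart" content="2014-04-07">7th April 2014</span>
    until <span property="event:dtend" content="2014-04-11">11th April</span>,
    in <span property="event:location">Seoul, Korea</span>.</p>
</div>"""

parser = MiniRDFaParser("http://example.org/events.html")  # hypothetical page address
parser.feed(SNIPPET)
parser.close()
for s, p, o in parser.triples:
    print(s, p, repr(o))
```

Run on the event markup, the sketch yields six triples: the `rdf:type` triple for the `Vevent`, plus one each for the summary, description, start date, end date and location. Note how the `content` attribute lets the machine-readable date (`2014-04-07`) differ from the human-readable text (`7th April 2014`). For anything beyond illustration, an established RDFa processor would be the better choice.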

RDFa is already widely used. For example, Google and Yahoo accept certain RDFa markup for aggregating reviews, online retailers such as Best Buy and Tesco use it to mark up products, and the London Gazette, the daily publication of the British Government, uses it to deliver data [6].

Links:

More information about RDFa: http://rdfa.info/

[1] http://blog.okfn.org/2010/10/25/getting-started-with-governmental-linked-open-data/

[2] http://vimeo.com/78423677

[3] http://traintimes.org.uk/map/tube/

[4] http://www.w3.org/TR/xhtml-rdfa/

[5] http://docs.oasis-open.org/office/v1.2/OpenDocument-v1.2.html

[6] http://www.london-gazette.co.uk/reuse

Please contact:

Steven Pemberton, CWI, The Netherlands

E-mail: