by Jan Gassen and Elmar Gerhards-Padilla

Today’s computer systems face a vast array of severe threats, posed both by automated attacks carried out by malicious software and by manual attacks carried out by individual humans. These attacks differ not only in their technical implementation but may also be location-dependent. Consequently, information from heterogeneous and distributed attack sensors must be combined to obtain a comprehensive picture of ongoing cyber attacks.

The arms race between cyber attackers and the organizations countering them has spawned a range of tools, some of which can even detect previously unknown attacks and analyze them in detail. Owing to the heterogeneity of possible cyber attacks, different tools have been developed to deal with particular classes of attacks. For instance, “server honeypots” masquerade as regular production systems, waiting to be probed and attacked directly over the network. These systems can therefore detect network-based attacks against vulnerable services but cannot detect attacks against client applications, for example those delivered through malicious documents. Moreover, even within one class of attack, several detection tools may be needed, since existing tools can generally detect only a subset of the attacks in that class. Finally, the information gathered by individual tools can be enriched by passive tools that supply additional details about the attacker’s origin or identify the attacker’s operating system by fingerprinting the observed network connection.

In order to allow comprehensive detection of cyber attacks, the Fraunhofer Institute for Communication, Information Processing and Ergonomics FKIE, in conjunction with the University of Bonn, is developing a distributed architecture for heterogeneous attack sensors in different geographic locations and networks. The major goal of the project is a scalable architecture that supports a large number of sensor locations and can easily be extended with additional sensors as well as new types of sensors.

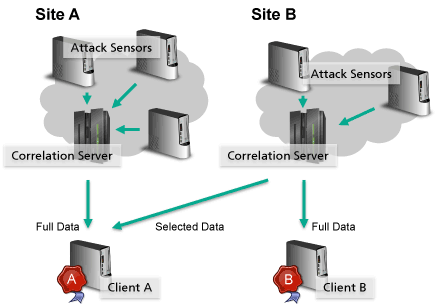

Creating a scalable architecture primarily requires distributing the network load and the required computational effort across different resources. Therefore, the entire set of deployed sensors is divided into independent sites, each containing a particular set of sensors (Figure 1). Each site is responsible for generating extensive information about attacks observed at the corresponding network or geographical location. The core component of a site is a central correlation server that receives information from the independent sensors and automatically aligns information that belongs to the same incident. During this process, redundant or duplicate information is removed before the resulting data is streamed to connected clients in a unified JSON format. These clients further process the collected information and can be located independently of the connected sites.

Figure 1: Overview of the distributed architecture for distributed and heterogeneous attack sensors.
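The correlation step performed within a site can be illustrated with a minimal sketch. It is not the project's implementation: the report fields (`sensor`, `src_ip`, `timestamp`, `details`), the 60-second window and the rule "same source address within the window means same incident" are all assumptions made for illustration.

```python
import json

WINDOW_SECONDS = 60  # assumed: reports this close together describe one incident


def correlate(reports, window=WINDOW_SECONDS):
    """Group sensor reports into incidents and merge their details.

    Each report is assumed to be a dict with the hypothetical keys
    "sensor", "src_ip", "timestamp" (seconds) and "details" (a dict).
    """
    incidents = []
    open_incident = {}  # src_ip -> incident currently being built
    for report in sorted(reports, key=lambda r: r["timestamp"]):
        src = report["src_ip"]
        incident = open_incident.get(src)
        if incident is None or report["timestamp"] - incident["last_seen"] > window:
            # No recent incident for this source: start a new one.
            incident = {
                "src_ip": src,
                "first_seen": report["timestamp"],
                "last_seen": report["timestamp"],
                "sensors": [],
                "details": {},
            }
            incidents.append(incident)
            open_incident[src] = incident
        incident["last_seen"] = report["timestamp"]
        if report["sensor"] not in incident["sensors"]:
            incident["sensors"].append(report["sensor"])
        # Identical key/value pairs from several sensors collapse into one;
        # on conflicting values the later report wins.
        incident["details"].update(report["details"])
    return incidents


def to_json_lines(incidents):
    """Serialize each incident as one JSON line for streaming to clients."""
    return [json.dumps(incident, sort_keys=True) for incident in incidents]
```

A real correlation server would likely use richer matching criteria than a source address and a fixed time window; the sketch only shows the align, deduplicate and serialize sequence.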

As a result of the preprocessing within the individual sites, a connected client only receives relevant and pre-parsed data that can be used directly for further processing. To store the received data stream, the client uses the distributed, schema-less database MongoDB. This allows the database to be deployed across multiple resources to achieve the performance needed for a given purpose. Moreover, the use of JSON data and a schema-less database design allows almost arbitrary data to be stored without the need to adjust the database, the client or the correlation server. Therefore, new sensors can be added to existing sites, and new sites with different sensors can be connected to a given client, without any adjustments.
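The following sketch shows why a schema-less store needs no adjustment when a new sensor type appears: every JSON line is inserted as-is, whatever fields it carries. The function name `store_stream` and the in-memory stand-in are hypothetical; the only interface assumed from the database driver is an `insert_one(document)` method, which pymongo collections provide.

```python
import json


def store_stream(lines, collection):
    """Insert each non-empty JSON line as one document; return the count.

    `collection` is any object with an insert_one(document) method,
    for example a pymongo Collection.
    """
    stored = 0
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank keep-alive lines (an assumption about the stream)
        collection.insert_one(json.loads(line))
        stored += 1
    return stored


class InMemoryCollection:
    """Stand-in so the sketch runs without a database server."""

    def __init__(self):
        self.documents = []

    def insert_one(self, document):
        self.documents.append(document)


if __name__ == "__main__":
    stream = [
        '{"src_ip": "192.0.2.1", "port": 445}',
        # A different sensor type with different fields needs no schema change.
        '{"src_ip": "198.51.100.7", "document": "invoice.pdf", "javascript": true}',
    ]
    collection = InMemoryCollection()
    print(store_stream(stream, collection), "documents stored")
```

With pymongo installed and a server running, the stand-in could be replaced by something like `MongoClient("mongodb://localhost:27017")["attacks"]["incidents"]`, where the database and collection names are placeholders.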

The more sites that are deployed within different networks or geographic locations, the more comprehensive the information gathered about ongoing attacks. It is therefore desirable for organizations to share data with each other, thus reducing the need for their own resources and the associated maintenance costs. On the other hand, the organizations involved may only be willing to share a particular subset of their recorded data. To this end, the correlation server within each site supports certificate-based authentication, whereby the certificates are also used to specify the information to be sent to the corresponding client.
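How a certificate might determine what a client receives can be sketched as a simple field filter. The article does not say how the mapping is encoded, so the policy table keyed by certificate common name, the field names and the function `filter_for_client` are all assumptions; the TLS handshake that authenticates the client is taken as already done.

```python
# Hypothetical policy: certificate common name -> fields that client may receive.
POLICY = {
    "partner-a.example": {"timestamp", "attack_type"},
    "internal-analyst.example": {"timestamp", "attack_type", "src_ip", "payload"},
}


def filter_for_client(incident, common_name, policy=POLICY):
    """Return only the fields of `incident` that this client may see.

    Clients whose certificate is not listed in the policy receive nothing.
    """
    allowed = policy.get(common_name, set())
    return {key: value for key, value in incident.items() if key in allowed}


if __name__ == "__main__":
    incident = {
        "timestamp": 1337000000,
        "attack_type": "smb-exploit",
        "src_ip": "192.0.2.1",
        "payload": "deadbeef",
    }
    print(filter_for_client(incident, "partner-a.example"))
```

Defaulting to an empty set for unknown certificates keeps the filter fail-closed, which seems the safer choice when sharing data across organizations.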

To manage and maintain the sensors within one or more sites from a single point, a controlling application has been developed that can be deployed on every resource involved. On each resource, the controlling application observes running processes and can perform simple countermeasures when it detects errors. If the controlling application cannot resolve an observed issue on its own, the administrator is notified to take further action. Furthermore, all controlling applications participate in a peer-to-peer network built on JGroups, allowing the administrator to join the network and issue commands to all participating resources.
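The observe, repair, escalate behaviour of the controlling application can be sketched as a single supervision pass. This is an illustration only: restarting is assumed to be the "simple countermeasure", the limit of three attempts is arbitrary, and the callables `is_running`, `restart` and `notify` are hypothetical hooks supplied by the caller. The JGroups peer-to-peer layer is not modelled.

```python
def supervise(is_running, restart, notify, max_restarts=3):
    """Check one process once and try to repair it.

    Returns "ok" if the process was running, "recovered" if a restart
    brought it back, and "escalated" if the administrator was notified.
    """
    if is_running():
        return "ok"
    for _ in range(max_restarts):
        restart()
        if is_running():
            return "recovered"
    # Automatic countermeasures failed: hand over to a human.
    notify(f"process still down after {max_restarts} restart attempts")
    return "escalated"


if __name__ == "__main__":
    state = {"up": False}

    def fake_restart():
        state["up"] = True  # pretend the restart succeeds

    print(supervise(lambda: state["up"], fake_restart, print))
```

In a deployment, such a pass would run periodically for every monitored sensor process, with `notify` wired to whatever alerting channel the administrator uses.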

A remaining challenge in observing different attacks from different locations is the increasing amount of data. While the data can be handled automatically by distributed databases, it remains hard for human analysts to extract particularly relevant data. Therefore, future work is focused on automatic clustering of incoming data in order to group similar attacks. Thus, newly registered attacks can be directly compared with similar known attacks, simplifying their identification and reducing the workload of human analysts.

Link:

http://www.fkie.fraunhofer.de/en/research-areas/cyber-defense.html

Please contact:

Jan Gassen, Elmar Gerhards-Padilla

Fraunhofer Institute for Communication, Information Processing and Ergonomics FKIE, Germany

E-mail: