by Emanuele Salerno

A research team at the Signal and Image Processing Lab of ISTI-CNR has been involved in studying data analysis algorithms for the European Space Agency’s Planck Surveyor Satellite since 1999. The huge amount of data on the cosmic microwave background radiation provided by the Planck sensors requires very efficient analysis algorithms and high-performance computing facilities. The CNR group has proposed some of the source separation procedures that are now operational at the Planck data processing centre in Trieste, Italy.

Satellite-borne radiometric observations for cosmic microwave background studies are normally presented as sets of multichannel, all-sky maps in which the background signal is contaminated by a number of galactic and extragalactic sources that dominate in certain frequency ranges. When the measurements are highly sensitive, separating all the radiation components in the maps becomes especially important. This is the case with the ESA cosmological mission known as the Planck Surveyor Satellite, whose nine channels, centred on frequencies between 30 and 857 GHz, provide huge all-sky maps with unequalled accuracy and angular resolution (see also ERCIM News 49 p. 14).

The Planck collaboration includes hundreds of scientists and dozens of institutes from all over the world. The satellite carries a telescope, provided by the Danish National Space Centre, with two instruments in its focal plane: a three-channel radiometer called the Low Frequency Instrument, under the responsibility of the Italian National Institute of Astrophysics in Bologna, and a six-channel bolometric sensor called the High Frequency Instrument, under the responsibility of the French Institute for Space Astrophysics in Orsay. The spacecraft was sent into orbit by an Ariane launcher on 14 May 2009, from the European spaceport in Kourou, French Guiana. The mission has now completed its first coverage of the microwave sky; the end of operations is expected in 2011.

Studying the small anisotropies of the cosmic background is a fundamental step towards understanding many aspects of the origin and evolution of the universe. As the source separation problem is crucial to the scientific goals of Planck, it has been studied intensively by many groups. The Planck group at ISTI-CNR in Pisa has been working on it since 1999, when the project’s first plenary meeting was held in Capri, Italy.

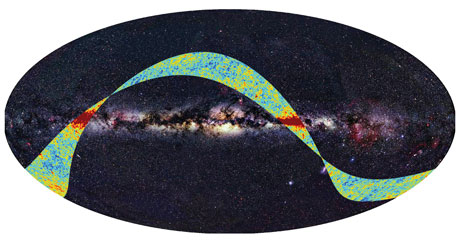

Figure 1: The first microwave sky data released by the Planck mission, superimposed on an all-sky map at optical wavelengths. The bright equatorial area represents the intense radiation from our Milky Way galaxy. The microwave data are mapped through a colour scale that indicates the deviations of the radiation temperature from the average background value of 2.726 K (red is hotter and blue is colder). Date: 17 Sep 2009. Satellite: Planck. Copyright: ESA, LFI & HFI Consortia (Planck). Background image: Axel Mellinger.

Our group started by adopting blind source separation techniques, motivated by the lack of an accurate data model and by a provisional assumption of mutual independence between the interfering radiations. In subsequent years, we developed more specific techniques that exploit information neglected by purely blind methods. In particular, the data model was extended to account for possible dependencies between sources and for their intrinsic spatial structure. The non-stationarity of the mixing operator and the instrumental noise have also been considered. Finally, since reliable error estimates are essential in any scientific data analysis, we devised accurate methods for estimating errors. The computational techniques adopted are diverse, ranging from quick fixed-point optimization strategies to accurate but lengthy fully Bayesian approaches. Since a single Planck map can have around 5 × 10⁷ pixels, even very fast algorithms need supercomputing facilities to process the complete data set. This is why the Planck data-processing centres in Trieste and Paris are equipped with very fast and powerful hardware, and why only the fastest component separation algorithms have been implemented in the routine data analysis pipelines.

Nevertheless, the more sophisticated Bayesian methods are still being studied, since they potentially offer advantages in flexibility, in the ability to include prior knowledge, and in the accuracy of both results and error estimates. Happily, the computational complexity of some of the new versions we are developing is decreasing, and we expect that further refinements will make them suitable for large data sets. Even if these algorithms are not ready for routine use before the end of the mission, they could still enable the research teams to perform accurate analyses on at least part of the complete data set.
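To give a flavour of the blind, fixed-point strategy mentioned above, the following is a minimal sketch of a generic symmetric FastICA iteration (with a tanh nonlinearity) applied to a toy two-channel mixture. It assumes the simplest instantaneous model x = A s, with no instrumental noise, no beam smoothing and a mixing matrix that is constant over the sky, which are exactly the simplifications our later work relaxes. The function names, the mixing matrix and the toy sources are hypothetical, and this is not the code running in the Planck pipelines.

```python
import numpy as np


def whiten(x):
    """Center each channel and whiten so channels are uncorrelated with unit variance."""
    x = x - x.mean(axis=1, keepdims=True)
    d, e = np.linalg.eigh(np.cov(x))  # rows = channels, columns = pixels
    return e @ np.diag(1.0 / np.sqrt(d)) @ e.T @ x


def sym_decorrelate(w):
    """Return (W W^T)^(-1/2) W, keeping the rows of W orthonormal."""
    d, e = np.linalg.eigh(w @ w.T)
    return e @ np.diag(1.0 / np.sqrt(d)) @ e.T @ w


def fastica(x, n_iter=200, tol=1e-6, seed=0):
    """Symmetric FastICA fixed-point iteration with g = tanh (illustrative sketch).

    Assumes a noiseless, instantaneous, spatially constant mixture x = A s.
    """
    z = whiten(x)
    n, m = z.shape
    rng = np.random.default_rng(seed)
    w = sym_decorrelate(rng.standard_normal((n, n)))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        g_prime = 1.0 - g ** 2
        # Fixed-point update E{z g(w^T z)} - E{g'(w^T z)} w, applied to all rows at once
        w_new = sym_decorrelate(g @ z.T / m - np.diag(g_prime.mean(axis=1)) @ w)
        # Converged when each new row is (up to sign) parallel to the old one
        converged = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0)) < tol
        w = w_new
        if converged:
            break
    return w @ z  # estimated sources, up to order, sign and scale


# Toy example: two independent non-Gaussian "sources" seen through two "channels"
rng = np.random.default_rng(1)
m = 20000
s = np.vstack([rng.uniform(-1.0, 1.0, m), rng.laplace(size=m)])
a = np.array([[1.0, 0.6], [0.4, 1.0]])  # hypothetical mixing matrix
x = a @ s
y = fastica(x)
corr = np.abs(np.corrcoef(np.vstack([s, y]))[:2, 2:])
print(np.round(corr, 3))
```

With real multichannel maps, each row of `x` would hold one frequency channel flattened over its pixels. The recovered components are determined only up to order, sign and scale, which is one reason why adding prior information, as the Bayesian approaches do, is attractive.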

The group in Pisa has been collaborating with several other Planck groups, mainly at the International School for Advanced Studies in Trieste, the Astronomical Observatory in Padova, Italy, and the Cantabria Institute of Physics in Santander, Spain. Some of the stochastic algorithms that we propose have been designed in collaboration with the ERCIM partners at Trinity College Dublin, in the framework of the ERCIM-led MUSCLE European Network of Excellence (see also ERCIM News 71 p. 6).

Links:

Main Planck portal: http://www.esa.int/SPECIALS/Planck/index.html

Planck science homepage: http://www.rssd.esa.int/index.php?project=PLANCK

Please contact:

Emanuele Salerno

ISTI-CNR

Tel: +39 050 315 3137

E-mail: