by Alessandro Curioni and Ray Walshe

Speaking recently at Teratec, the conference on High Performance Computing (HPC) in Paris, the European Commissioner for the Information Society, Viviane Reding, said: "Supercomputers are the 'cathedrals' of modern science, essential tools to push forward the frontiers of research at the service of Europe's prosperity and growth." This statement underlines the strategic emphasis being placed on HPC across the European Union, where investment in selected HPC centres aims to develop many key areas of research and industry.

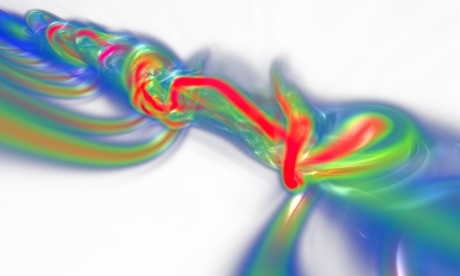

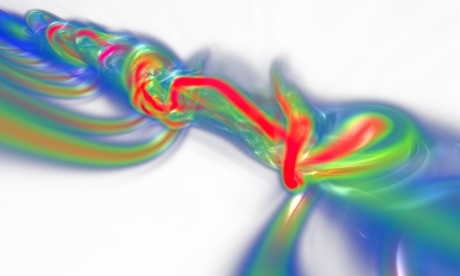

Massively parallel simulations of aircraft wake instabilities. An aircraft wake consists of powerful, long-lasting trailing vortices. This potential hazard imposes safety distances between aircraft and thus limits airport traffic. High-resolution simulations running on thousands of processors can accurately capture fast-growing instabilities, which perturb the vortices and can therefore accelerate their decay. The figure (also on the cover) shows a volume rendering of vorticity in the case of a fast-growing instability. Secondary vortices generated by the stabilizer reconnect with those from the wing, resulting in a disturbance that propagates along and inside the vortex cores. Credits: Philippe Chatelain, Michael Bergdorf, Diego Rossinelli, Petros Koumoutsakos, ETH Zurich, Switzerland; Alessandro Curioni, Wanda Andreoni, IBM Zurich Research Laboratory, Switzerland. Acknowledgments: IBM T.J. Watson Research Center, Swiss Supercomputing Center.