Computer systems architecture has been a catalyst in this remarkable development. Until recently, innovations in hardware would speed up software with little or no involvement from users: a new processor would simply run existing software faster, including software written before the processor reached the market. Unfortunately, this 'free ride' has come to an end because of technological barriers. As hardware complexity rose rapidly in pursuit of further performance improvements, power consumption also began to grow exponentially, making computing systems less energy-efficient and less reliable. Furthermore, exponentially increasing hardware complexity ceased to deliver exponentially improving performance, yielding diminishing returns instead.

To address these challenges, the industry turned to processors with multiple cores (known as multi-core or many-core processors) and shifted most of the responsibility for sustaining performance improvements onto software. To exploit multiple cores, software must be parallelized, a task traditionally considered hard, time-consuming, error-prone and feasible only in specialized scientific application domains.

The emergence of multi-core processors as a de facto standard has created an intriguing situation. The processor in a Sony PlayStation 3, a set-top box that can be bought for around 400 Euros, has nine cores and can perform as many as 200 billion operations per second. Ten years ago, only the fastest computers in the world achieved this level of performance. To exploit it, however, a programmer must invest tremendous effort in porting, parallelizing and specializing code for the PlayStation's processor, the Cell Broadband Engine (Cell/BE).

The author's own experience suggests that it takes more than three months for an advanced PhD student with ample background in parallel computing to port a reasonably sized computational biology algorithm (a few thousand lines of code) to this platform. The effort, and perhaps the associated code development methodology, are most likely not portable to other (single-core or multi-core) processors. It is even questionable whether the effort carries over to other algorithms and applications running on the same processor.

Researchers at the Computer Architecture and VLSI Systems Laboratory (CARV) of FORTH-ICS are tackling the software productivity crisis that has emerged since multi-core processors were introduced to the market. A significant component of this research is the development of programming environments (runtime systems, languages, compilers and hardware/software interfaces) and tools for processors with multiple heterogeneous cores. Some of these cores have a general-purpose architecture (eg superscalar pipelined), while others have a specialized architecture (eg vector processing units) designed to accelerate specific algorithms and application kernels. Besides the Cell/BE, examples of such processors include the AMD Fusion processor and the Intel EXO architecture. CARV is also heavily involved in developing next-generation multi-core processors through its participation in the SARC (Scalable computer ARChitecture) project.

Our research on software environments for programming current and future heterogeneous multi-core processors focuses on providing a unified framework for exploiting the multiple layers and forms of parallelism available in these processors, while requiring the programmer to use only a single 'expression' of parallelism in the program. In object-oriented terms, we are seeking mechanisms that exploit polymorphism in the expression of parallelism: programmers use a single notion of an algorithmic 'work unit', and this notion is mapped to multiple (overlapping or non-overlapping) physical implementations of parallel execution units. We have developed several research prototypes to support such a 'hardware-independent' framework for parallel programming.
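To make the idea of one 'work unit' with several physical implementations more concrete, the following Python sketch dispatches a single algorithmic work unit, unchanged, to three interchangeable back ends: sequential, fine-grained (one task per item) and coarse-grained (one task per chunk of items). It is an illustration under our own assumptions, not the interface of the CARV prototypes; the names `Backend`, `SequentialBackend`, `ThreadPoolBackend`, `ChunkedBackend` and the toy work unit `score` are hypothetical. Python threads are used only to keep the example self-contained and do not model heterogeneous cores such as those of the Cell/BE.

```python
from abc import ABC, abstractmethod
from collections.abc import Callable, Sequence
from concurrent.futures import ThreadPoolExecutor
from typing import TypeVar

T = TypeVar("T")  # type of one input item
R = TypeVar("R")  # type of one result


class Backend(ABC):
    """One physical implementation of parallel execution (hypothetical interface)."""

    @abstractmethod
    def run(self, unit: Callable[[T], R], items: Sequence[T]) -> list[R]:
        """Apply the work unit to every item, returning results in input order."""


class SequentialBackend(Backend):
    """Runs every work unit in order on the calling thread."""

    def run(self, unit, items):
        return [unit(x) for x in items]


class ThreadPoolBackend(Backend):
    """Fine-grained mapping: one task per work unit on a pool of threads."""

    def __init__(self, workers: int) -> None:
        self.workers = workers

    def run(self, unit, items):
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            # Executor.map yields results in the order of the inputs.
            return list(pool.map(unit, items))


class ChunkedBackend(Backend):
    """Coarse-grained mapping: work units grouped into chunks, one task per chunk."""

    def __init__(self, workers: int, chunk: int) -> None:
        self.workers = workers
        self.chunk = chunk

    def run(self, unit, items):
        chunks = [items[i:i + self.chunk] for i in range(0, len(items), self.chunk)]
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            parts = list(pool.map(lambda c: [unit(x) for x in c], chunks))
        # Flatten the per-chunk results back into one ordered list.
        return [r for part in parts for r in part]


def score(x: int) -> int:
    """Hypothetical work unit standing in for a real computational kernel."""
    return x * x


if __name__ == "__main__":
    data = list(range(10))
    for backend in (SequentialBackend(), ThreadPoolBackend(4), ChunkedBackend(4, 3)):
        # The same work unit runs unchanged on every back end.
        print(type(backend).__name__, backend.run(score, data))
```

Because every back end consumes the same `score` function, moving between fine-grained and coarse-grained execution means choosing a different back end rather than rewriting the algorithm.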

These prototypes include:

- An event-driven task execution and scheduling model that better coordinates scheduling across heterogeneous cores, improves the ability of software to exploit fine-grained and coarse-grained parallelism simultaneously, and dynamically and transparently controls the execution cores allocated to program components.

- Models of multi-grained (layered) parallelism for systems with many heterogeneous multi-core processors, each with many heterogeneous cores. The design space for mapping software to such multi-core systems is exponential in size and exhibits non-linear performance effects when concurrency changes at different layers of the system; these models prune it drastically.

- A runtime auto-tuning tool that performs an online empirical search of program decompositions and mappings to parallel architectures, optimizing performance based on dynamic execution feedback.
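Online empirical auto-tuning can be sketched as follows: during the first steps of a run, each candidate decomposition/mapping is tried a few times and timed; later steps reuse the candidate with the best mean measured time, while new measurements keep flowing back. The Python code below is a minimal sketch under that assumption only; the class `OnlineTuner`, the helper `run_step` and the (worker count, chunk size) candidates are hypothetical and do not describe the actual CARV tool, which may use a different search strategy.

```python
import itertools
import time
from collections.abc import Callable, Hashable, Sequence
from statistics import mean


class OnlineTuner:
    """Online empirical search over candidate mappings (hypothetical sketch).

    Exploration: each candidate is run `trials` times and its time recorded.
    Exploitation: afterwards, the candidate with the lowest mean time is chosen.
    Times reported during exploitation keep updating the means.
    """

    def __init__(self, candidates: Sequence[Hashable], trials: int = 2) -> None:
        if not candidates or trials < 1:
            raise ValueError("need at least one candidate and one trial")
        self.candidates = list(candidates)
        self.trials = trials
        self.samples: dict[Hashable, list[float]] = {c: [] for c in self.candidates}

    def next_config(self) -> Hashable:
        # Exploration phase: first candidate that still needs measurements.
        for c in self.candidates:
            if len(self.samples[c]) < self.trials:
                return c
        # Exploitation phase: best mean time observed so far.
        return self.best()

    def report(self, config: Hashable, elapsed: float) -> None:
        # Dynamic execution feedback from one program step.
        self.samples[config].append(elapsed)

    def best(self) -> Hashable:
        means = {c: mean(s) for c, s in self.samples.items() if s}
        return min(means, key=means.__getitem__)


def run_step(tuner: OnlineTuner, step: Callable[[Hashable], None]) -> float:
    """Run one program step with the tuner's chosen config and report its time."""
    config = tuner.next_config()
    start = time.perf_counter()
    step(config)
    elapsed = time.perf_counter() - start
    tuner.report(config, elapsed)
    return elapsed


if __name__ == "__main__":
    # Hypothetical candidates: (worker count, chunk size) pairs.
    tuner = OnlineTuner(list(itertools.product([1, 2, 4], [16, 64])), trials=2)

    def step(config):
        workers, chunk = config
        # Stand-in workload whose cost merely depends on the config; a real
        # program would run one iteration of its kernel with this mapping.
        sum(i * i for i in range(20_000 // workers + 100 * chunk))

    for _ in range(20):
        run_step(tuner, step)
    print("selected mapping:", tuner.best())
```

Trying every candidate is practical only for small candidate sets; when the mapping space grows exponentially, as noted for layered parallelism, a tuner would need to prune or sample it instead.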

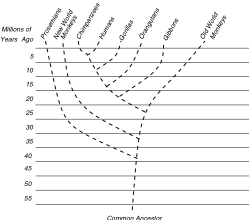

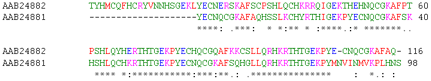

We have deployed these components on the Sony PlayStation 3 and several other platforms to model and accelerate, with little effort, several computational biology algorithms, including phylogenetic tree inference and multiple sequence alignment. Figure 1 shows some experimental results: using the same code base and our acceleration tools, a PlayStation 3 achieves almost an order of magnitude higher performance than a high-end 2.8 GHz Intel Core Duo processor in a MacBook. The PlayStation's performance per Euro can be up to two orders of magnitude better than that of the MacBook.

Links:

http://www.top500.org

http://www.sarc-ip.org

Please contact:

Dimitrios S. Nikolopoulos

FORTH-ICS, Greece

E-mail: dsn@ics.forth.gr
