by Kees van der Sluijs and Geert-Jan Houben

While it is generally desirable that huge cultural collections should be opened up to the public, the paucity of available metadata makes this a difficult task. Researchers from the Eindhoven University of Technology in the Netherlands have built a Web application framework for opening up digital versions of such multimedia collections with the help of the public. This creates a win-win situation for users and content providers.

The Regional Historic Centre Eindhoven (RHCe) manages all historical information related to the cities in the region around Eindhoven in the Netherlands. The information is gathered from local government agencies and from private individuals and groups. It includes not only enormous collections of birth, marriage and death certificates, but also posters, drawings, pictures, videos and city council minutes. One of the centre's goals is to make these collections available to the general public. However, very little metadata is available for the videos and pictures, which makes indexing them for navigation or search very hard. Moreover, most of the fragile material is stored in vaults and is thus physically inaccessible to the public.

Through simple tagging techniques, users can collaboratively provide metadata for previously uncharted collections of multimedia documents. By applying semantic and linguistic techniques, these user tags can be enriched with well-structured ontological information provided by professionals. This gives content providers access to high-quality metadata with little effort, eg for archival purposes. In return, the rich metadata combined with ontological sources offers users more powerful navigation and search facilities, helping them get a grip on sometimes very large multimedia collections and find what they are really looking for.

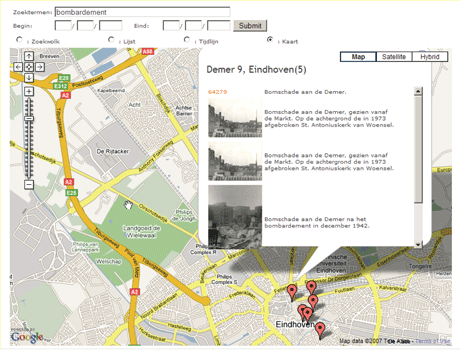

Web engineering researchers from the Eindhoven University of Technology took on the challenge of building a Web application framework that would open up digital versions of these multimedia documents to the public in a meaningful way. A prototype framework, called Chi, provides a faceted browser view over the different data sets, meaning that the data can be navigated or searched along a number of different dimensions. The scenes in photo and video collections share three dimensions: time, location and a set of keywords associated with the scene. In Chi these dimensions are described by specialist ontologies. Professionals adapt the metadata schema of every collection by aligning its notions of these dimensions with those in the ontologies. Thanks to this alignment, users can navigate heterogeneous multimedia collections in a uniform way.
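
The alignment step can be pictured with a minimal Python sketch, assuming each collection's metadata schema reduces to a flat mapping from field names to the three shared dimensions. The collection names, field names and records are hypothetical, and a plain lookup table stands in for the ontology-based alignment Chi actually performs.

```python
from typing import Any

# Shared dimensions; in Chi these are described by specialist ontologies.
DIMENSIONS = ("time", "location", "keywords")

# Hypothetical alignments: collection field name -> shared dimension.
ALIGNMENTS: dict[str, dict[str, str]] = {
    "photos": {"datum": "time", "plaats": "location", "trefwoorden": "keywords"},
    "videos": {"recorded": "time", "city": "location", "subjects": "keywords"},
}


def align(collection: str, record: dict[str, Any]) -> dict[str, Any]:
    """Re-key one record onto the shared dimensions; unmapped fields are dropped."""
    mapping = ALIGNMENTS[collection]
    aligned: dict[str, Any] = {"collection": collection}
    for field, value in record.items():
        dimension = mapping.get(field)
        if dimension is not None:
            aligned[dimension] = value
    return aligned


# Two records with different schemas end up with the same dimension keys.
photo = {"datum": 1952, "plaats": "Eindhoven", "trefwoorden": ["market"], "inv_nr": "F123"}
video = {"recorded": 1968, "city": "Eindhoven", "subjects": ["carnival"]}
scenes = [align("photos", photo), align("videos", video)]
for scene in scenes:
    print(scene)
```

Once every collection is expressed in the same dimensions, a single browser can query them all without knowing each collection's original schema.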

Chi has a separate visualization for each dimension in its user interface: a timeline for time, Google Maps for location, and, for the keywords, a graph that represents the relatedness of terms. Results that share a characteristic in one dimension are grouped together in the corresponding view, so for any given picture or video, other pictures and videos sharing that characteristic can be found.
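
The grouping idea can be sketched as follows, assuming scenes have already been aligned to the shared dimensions. The scene records are made up, bucketing years by decade is an assumed timeline granularity, and two scenes are taken to share a keyword characteristic whenever their keyword sets overlap; Chi's relatedness graph is richer than this simple set intersection.

```python
# Hypothetical scenes, already aligned to the shared dimensions.
SCENES = [
    {"id": "p1", "time": 1952, "location": "Eindhoven", "keywords": {"market", "square"}},
    {"id": "p2", "time": 1958, "location": "Helmond", "keywords": {"market"}},
    {"id": "v1", "time": 1968, "location": "Eindhoven", "keywords": {"carnival"}},
]


def decade(year: int) -> int:
    """Assumed timeline granularity: scenes in the same decade share a time characteristic."""
    return year // 10 * 10


def related(target: dict, scenes: list[dict]) -> dict[str, list[str]]:
    """For each dimension, list the ids of other scenes sharing a characteristic with target."""
    result: dict[str, list[str]] = {"time": [], "location": [], "keywords": []}
    for scene in scenes:
        if scene["id"] == target["id"]:
            continue
        if decade(scene["time"]) == decade(target["time"]):
            result["time"].append(scene["id"])
        if scene["location"] == target["location"]:
            result["location"].append(scene["id"])
        if scene["keywords"] & target["keywords"]:  # at least one keyword in common
            result["keywords"].append(scene["id"])
    return result


print(related(SCENES[0], SCENES))
```

Each list corresponds to one view: the timeline, the map and the keyword graph would each highlight a different set of neighbours for the same starting picture.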

The RHCe institute aims to have good metadata descriptions of all its archived data, but its few domain specialists have only limited time and the collections are huge. For them, the biggest benefit of Chi is obtaining metadata from users. However, users do not want to fill in large forms to provide well-structured data about the scenes: many will find this too complicated or too time-consuming. Chi therefore uses several tricks to keep things simple while still obtaining this information. First, it uses an ordinary tagging mechanism by which users can enter keywords or short sentence fragments, called tags, to describe a scene. Then, via several matching mechanisms that take syntax, semantics and user reinforcement into account, users are presented with alternatives to their input tags. Users are not obliged to click on an alternative, but if they do, the choice is registered. If users often select a specific alternative for a given tag, we assume there is some kind of relationship between the original and the selected tag, and we use this relationship to improve tagging suggestions and user queries.
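
The reinforcement loop might look roughly like this. It is a hypothetical sketch: Python's standard `difflib.get_close_matches` stands in for the syntactic matcher, semantic (ontology-based) matching is left out, and the class name, the threshold of three clicks and the example vocabulary are assumptions rather than details of Chi.

```python
import difflib
from collections import Counter


class TagSuggester:
    """Hypothetical sketch: syntactic suggestions reinforced by users' clicks."""

    def __init__(self, vocabulary: list[str], threshold: int = 3):
        self.vocabulary = list(vocabulary)
        self.threshold = threshold  # assumed number of clicks before a link is trusted
        self.clicks: Counter = Counter()  # (typed tag, clicked alternative) -> count

    def related_tags(self, tag: str) -> list[str]:
        """Alternatives users picked for this tag at least `threshold` times, most-clicked first."""
        return [alt for (typed, alt), count in self.clicks.most_common()
                if typed == tag and count >= self.threshold]

    def suggest(self, tag: str, n: int = 3) -> list[str]:
        # Learned relationships first, then syntactically similar vocabulary terms.
        similar = difflib.get_close_matches(tag, self.vocabulary, n=n, cutoff=0.6)
        ordered = dict.fromkeys(self.related_tags(tag) + similar)  # dedupe, keep order
        return list(ordered)[:n]

    def record_click(self, tag: str, alternative: str) -> None:
        """Called only when a user clicks an alternative; ignored suggestions record nothing."""
        self.clicks[(tag, alternative)] += 1


suggester = TagSuggester(["church", "churches", "market", "carnival"])
print(suggester.suggest("curch"))  # only syntactic matches so far
for _ in range(3):
    suggester.record_click("kerk", "church")  # users keep picking "church" for Dutch "kerk"
print(suggester.suggest("kerk"))
```

A pair like "kerk" and "church" has no spelling overlap, so only the accumulated clicks can connect them; that is the kind of relationship the reinforcement step is meant to capture.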

Users can see the tags of others and can agree or disagree with a tag by clicking a thumbs-up or a thumbs-down icon. If many people agree on a certain tag for a certain scene, we assume it is highly probable that the tag correctly describes the scene. Finally, these tags are presented to an RHCe domain specialist, who makes the final judgment. This judgment is in turn used to identify the users who often provide good tags and those who do not. Good taggers are then given more weight when calculating whether a tag is useful.
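
A sketch of reputation-weighted voting, under stated assumptions: each user's weight is the share of their tags the specialist accepted (smoothed so that newcomers start at 0.5), the same weight is applied to that user's thumbs-up and thumbs-down votes, and proposing a tag counts as a thumbs-up from its author. None of these formulas is taken from Chi; the class and user names are hypothetical and only illustrate the idea.

```python
from collections import defaultdict


class TagJudge:
    """Hypothetical sketch of reputation-weighted tag voting."""

    def __init__(self) -> None:
        # Smoothing prior: every user starts as if 1 of 2 judged tags was accepted.
        self.accepted: defaultdict[str, int] = defaultdict(lambda: 1)
        self.judged: defaultdict[str, int] = defaultdict(lambda: 2)

    def weight(self, user: str) -> float:
        """Smoothed share of the user's specialist-judged tags that were accepted."""
        return self.accepted[user] / self.judged[user]

    def score(self, author: str, votes: list[tuple[str, int]]) -> float:
        """Weighted agreement in [-1, 1]; the author's own tag counts as a thumbs-up.

        votes: (user, +1) for thumbs-up or (user, -1) for thumbs-down.
        """
        ballots = [(author, 1)] + list(votes)
        total = sum(self.weight(user) for user, _ in ballots)
        return sum(self.weight(user) * vote for user, vote in ballots) / total

    def record_verdict(self, author: str, accepted: bool) -> None:
        """Store the specialist's final judgment of a tag against the tag's author."""
        self.judged[author] += 1
        if accepted:
            self.accepted[author] += 1


judge = TagJudge()
for _ in range(4):
    judge.record_verdict("anna", accepted=True)
judge.record_verdict("bob", accepted=False)
print(judge.score("carol", [("anna", 1), ("bob", -1)]))
```

The smoothing keeps a single verdict from making a newcomer either fully trusted or fully ignored; only repeated specialist judgments move a user's weight far from the starting value.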

A second version of Chi is currently being finalized and prepared for more extensive user testing. The first version has already been tested by end users, whose responses and feedback were very positive and encouraging. This has motivated RHCe to continue working with the researchers on applying semantic technology.

Links:

http://wwwis.win.tue.nl/~ksluijs/

http://wwwis.win.tue.nl/~hera/

http://www.icwe2007.org

Please contact:

Kees van der Sluijs and Geert-Jan Houben

Eindhoven University of Technology, The Netherlands

Tel: +31 40 247 5154

E-mail: k.a.m.sluijs@tue.nl, G.J.Houben@tue.nl