by Vicente Matellán, Francisco J. Rodríguez-Lera and Jesús Balsa (University of León)

The robotics industry is set to suffer the same problems the computer industry has been facing in recent decades. This is particularly disturbing for critical tasks such as those performed by surgical or military robots, but it is also challenging for ostensibly benign household robots such as vacuum cleaners and tele-conference bots. What would happen if these robots were hacked? At RIASC (Research Institute in Applied Science in CyberSecurity) we are working on tools and countermeasures against cyber attacks in cyber-physical systems.

Robots are set to face problems similar to those faced by the computer industry when the internet spread 30 years ago. Suddenly, systems whose security had not been considered are vulnerable. Industrial robots have been working for decades, protected physically by walls and cybernetically by their isolation. But their protection is no longer assured: manufacturing facilities are now connected to distributed control networks to enhance productivity and to reduce operational costs, and their robots are suffering the same problems as any other cyber-physical system.

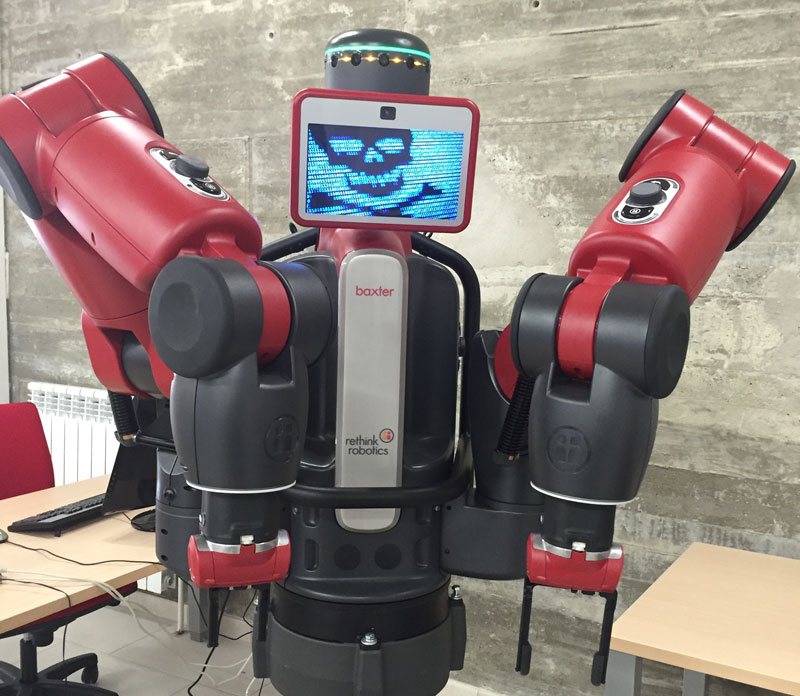

Figure 1: Hacked Baxter robot.

Manufacturers of robots for critical applications were the first to express concern. Defence and security robots, which by definition are exposed to hostile attacks, were the first robots to be hardened. But some other applications, such as medical robots, are equally critical.

Currently, medical robots are usually tele-operated systems, which exposes them to communications threats. Applied to tele-surgical robots, these threats can be grouped into four categories:

- Privacy violations: information gathered by the robot and transmitted to the operator is intercepted. For instance, images or data about a patient’s condition can be obtained.

- Action modifications: actions commanded by the operator are changed.

- Feedback modifications: sensor information (haptic, video, etc.) that the robot is sending to the operator is changed.

- Combination of actions and feedback: the robot has been hijacked.

At RIASC [L1] we think that the real challenge will be the widespread use of service robotics. Household robots are becoming increasingly common (autonomous vacuum cleaners, tele-conference bots, assistants) and, as Bill Gates observed [1], this is just the beginning. The software controlling these robots needs to be secured, and in many cases it was not designed with security problems in mind.

Many service robots, for instance, are based on the Robot Operating System (ROS). ROS started as a research system, but currently most manufacturers of commercial platforms use ROS as the de facto standard for building robotic software. Well-known and commercially successful robots like Baxter (in Figure 1) are programmed using ROS, and almost all robotic start-ups are based on it, owing both to the tremendous amount of software available for basic functions and to its flexibility.

ROS [L2] is a distributed architecture where ‘nodes’, programs in ROS terminology, ‘publish’ the results of their computations. For instance, a typical configuration could be made up of a node that receives sensor data (is ‘subscribed’ to that data) and publishes information about recognized objects in a ‘topic’. Another node could publish the estimated position of the robot in a different topic. And a third one could ‘subscribe’ to these two topics to look for a particular object required by the user.
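
The three-node configuration described above can be illustrated with a small in-process model. This is only a sketch of the publish/subscribe idea, not the ROS API: the `ToyBus` class, the topic names (`/camera`, `/recognized_objects`, `/robot_pose`) and the message shapes are all hypothetical and chosen for illustration.

```python
from collections import defaultdict


class ToyBus:
    """In-process stand-in for topic-based publish/subscribe (NOT the ROS API)."""

    def __init__(self):
        # Maps a topic name to the list of callbacks subscribed to it.
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every subscriber of this topic.
        for callback in list(self._subscribers[topic]):
            callback(message)


class ObjectFinder:
    """Third node: subscribes to two topics and looks for one object."""

    def __init__(self, bus, wanted):
        self.wanted = wanted
        self.last_pose = None
        self.sightings = []
        bus.subscribe("/recognized_objects", self.on_object)
        bus.subscribe("/robot_pose", self.on_pose)

    def on_pose(self, pose):
        self.last_pose = pose

    def on_object(self, label):
        # Record where the robot was when the wanted object was seen.
        if label == self.wanted:
            self.sightings.append((label, self.last_pose))


def run_demo():
    bus = ToyBus()
    finder = ObjectFinder(bus, wanted="cup")
    # First node: subscribed to sensor data, publishes recognized objects.
    bus.subscribe(
        "/camera",
        lambda frame: bus.publish("/recognized_objects", frame["label"]),
    )
    # Second node (simulated here): publishes the estimated robot position.
    bus.publish("/robot_pose", (1.0, 2.0))
    bus.publish("/camera", {"label": "chair"})
    bus.publish("/robot_pose", (3.0, 4.0))
    bus.publish("/camera", {"label": "cup"})
    return finder.sightings
```

Note that nothing in this model checks who is publishing: any code holding a reference to the bus can publish to any topic, which mirrors the trust assumption that makes such architectures easy to build and hard to secure.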

Messages in this set-up are sent using TCPROS, the ROS transport layer, which uses standard TCP/IP sockets. This protocol uses plain-text communications and unprotected TCP ports, and exchanges unencrypted data. Malware could easily interfere with these communications, read private messages or even replace legitimate nodes. Security was not a requirement for ROS at its conception, but now it should be. ROS 2 [L3] is being developed using Data Distribution Service (DDS) middleware to address robotics cyber vulnerabilities.
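
To make the risk of unauthenticated messages concrete, the following sketch shows one generic countermeasure: attaching a keyed hash (HMAC) to each message so that a receiver can detect modified commands. This is not how TCPROS, ROS 2 or DDS work; the frame layout, the `sign` and `verify` helpers and the pre-shared key are assumptions made purely for illustration. The sketch addresses integrity only: the payload still travels in readable form, so it does nothing for privacy.

```python
import hashlib
import hmac
import json

# Hypothetical pre-shared key; a real system would need proper key management.
SHARED_KEY = b"demo-key-not-for-production"


def sign(message, key=SHARED_KEY):
    """Serialize a message and prepend an HMAC-SHA256 tag (hex), separated by '.'."""
    payload = json.dumps(message, sort_keys=True).encode("utf-8")
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest().encode("ascii")
    return tag + b"." + payload


def verify(frame, key=SHARED_KEY):
    """Return the decoded message if the tag matches, otherwise None."""
    # The hex tag never contains '.', so the first '.' ends the tag.
    tag, _, payload = frame.partition(b".")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest().encode("ascii")
    if not hmac.compare_digest(tag, expected):
        return None  # Message was altered or signed with a different key.
    return json.loads(payload)


def demo():
    command = {"cmd": "move", "speed": 0.2}
    frame = sign(command)
    # An attacker on the network changes the commanded speed in transit.
    tampered = frame.replace(b"0.2", b"9.9")
    return verify(frame), verify(tampered)
```

In this sketch, `demo()` returns the original command for the untouched frame and `None` for the tampered one. Preventing eavesdropping would additionally require encryption of the payload.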

The situation is potentially even worse in cloud robotics. There are several companies whose robots use services in the cloud, which means that readings from robot sensors (images, personal data, etc.) are sent over the internet, processed on the company's servers and sent back to the robot. This is nothing new, since it happens every day in our smartphone apps, but a smartphone is under our control: it does not move, it cannot go to our bedroom by itself and take a picture, and it cannot drive our car.

Speaking of cars, self-driving cars are currently one of the best-known instances of “autonomous service robots” and a good example of cloud robotics. Many cars are currently being updated remotely [L4], and most cars use on-line services, from entertainment to localization or emergency services.

Risk assessment should be the first step in a broad effort by the robotics community to increase cybersecurity in service robotics. Marketing and research are focused on developing useful robots, but cybersecurity has to be a requirement: robots need cyber safety. Hacking robots and taking control of them may endanger not only the robot, but humans and property in the vicinity.

Links:

[L1] http://riasc.unileon.es

[L2] http://ros.org

[L3] http://design.ros2.org/

[L4] https://www.teslamotors.com/blog/summon-your-tesla-your-phone

References:

[1] B. Gates: “A robot in every home”, Scientific American, pp. 4-11, Jan. 2007, http://www.scientificamerican.com/article.cfm?id=a-robot-in-every-home

[2] M. Quigley, et al.: “ROS: An open-source Robot Operating System”, in ICRA Workshop on Open Source Software, 2009, https://www.willowgarage.com/sites/default/files/icraoss09-ROS.pdf

Please contact:

Vicente Matellán

Grupo de Robótica, Escuela de Ingenierías Industrial e Informática, Universidad de León, Spain

+34 987 291 743