Everything humans express, in whatever form, arouses emotions in everyone who witnesses that expression. Those emotions depend on the witness and vary over time. For instance, an utterance like "I'm now going to smoke a cigar in my office" arouses different emotions today than it did ten years ago. To truly preserve (digital multimedia) expressions, the different kinds of emotions they arouse have to be preserved as well.

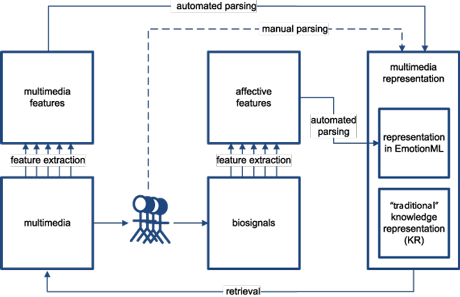

Traditionally, digital preservation (DP) is approached from an engineering rather than a user perspective. Consequently, definitions such as the following have been proposed: "Digital preservation combines policies, strategies and actions that ensure access to digital content over time" (ALA, 2007). This article approaches DP of multimedia from an end-user perspective. More specifically, we suggest taking into account users' most important association with virtually all media and, as such, introduce a new dimension to DP: emotion. This article discusses how this new dimension can be integrated into the traditional frameworks used for DP; see also Figure 1.

Let us start by stating our perspective on knowledge representation (KR), which is founded on its traditional definition. In line with AIM (1993), we argue that a KR can play five distinct roles, each crucial. A KR can be (i) a substitute for the object itself; (ii) a set of ontological commitments; (iii) a fragmentary theory of intelligent reasoning; (iv) a medium for pragmatically efficient computation; and (v) a medium of human expression. This perspective on KR is already more user-centred than the ALA (2007) definition of DP.

Figure 1: A scheme for the process of user-centred digital preservation of multimedia. The process starts with either an annotation by the user, which enables digital preservation, or a user's query that requires digitally preserved multimedia. These users can be the same person or different persons. The boxes denote information sources. Arrows denote either core (solid lines) or optional (dashed lines) processes. Please note that this scheme is simplified; eg fusion and classification processes are omitted.

Through traditional KR, a range of information can be captured, including multimedia; eg SMIL (2008). Regrettably, we suffer from information overload, and multimedia retrieval techniques, which rely on the extraction of low-level features, are not as good as sometimes thought. While more complex compounds of these low-level features enable the definition of high-level features (eg objects), the mapping of such features, either low- or high-level, onto semantics is still an unsolved problem. Consequently, (semi-automatic) annotation of multimedia data is still the best solution.

As stated, we want to take multimedia KR one step further. As is now generally acknowledged by the artificial intelligence community, emotions play a crucial role in understanding human intelligence, creating artificial intelligence, and the interaction between entities (eg human and computer). We adopt this notion and, in addition, state that it is important to include emotions when aiming for DP, as they are a primary form of human expression and consequently of human communication; cf AIM (1993). For example, laypersons can benefit from an enriched representation of an abstract painting that describes its emotional expression.

Recently, the W3C launched the first working draft of the Emotion Markup Language (EmotionML). EmotionML is "a 'plug-in' language suitable for use in three different areas: (1) manual annotation of data; (2) automatic recognition of emotion-related states from user behaviour; and (3) generation of emotion-related system behaviour." Each of these three areas is of interest for DP, as we will explain next.

EmotionML gives concrete possibilities for capturing the emotional communication of digital art; manual (or semi-automatic) annotations can preserve a much richer representation than can be obtained solely through traditional KR means. Manual and semi-automatic annotation of the emotional expression of art is, to some extent, currently possible; see also Figure 1.
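To make the idea of a manual annotation concrete, the following is a minimal sketch in Python that builds one EmotionML-style annotation for a preserved painting. The namespace and the element and attribute names (`emotion`, `category`, `intensity`, `reference`, `role`) reflect our reading of the W3C specification and should be verified against the version in use, as the syntax has changed between drafts. The function name `annotate`, the painting URI and the emotion label are hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumed EmotionML namespace; verify against the W3C specification.
NS = "http://www.w3.org/2009/10/emotionml"
ET.register_namespace("", NS)


def annotate(media_uri: str, category: str, intensity: float) -> ET.Element:
    """Return an EmotionML-style <emotion> element for one manual annotation."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must lie in [0, 1]")
    emotion = ET.Element(f"{{{NS}}}emotion")
    # The emotion label chosen by the annotator.
    ET.SubElement(emotion, f"{{{NS}}}category", {"name": category})
    # How strongly the emotion is expressed, on an assumed 0..1 scale.
    ET.SubElement(emotion, f"{{{NS}}}intensity", {"value": f"{intensity:.2f}"})
    # Link the annotation to the preserved object it describes.
    ET.SubElement(
        emotion,
        f"{{{NS}}}reference",
        {"uri": media_uri, "role": "expressedBy"},
    )
    return emotion


# Hypothetical painting URI and label, for illustration only.
element = annotate("http://example.org/paintings/42.jpg", "melancholy", 0.7)
xml_text = ET.tostring(element, encoding="unicode")
print(xml_text)
```

Stored alongside the traditional KR of the painting, such a fragment would preserve the annotator's reading of its emotional expression, not only its content.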

Automatic recognition of emotions - 'affective computing' - goes well beyond the scope of traditional DP. Nevertheless, it can be exploited to automatically annotate digitally preserved multimedia; see also Figure 1. Although not yet mature and struggling with various problems, affective computing is currently moving into its next stage of development (Guid, 2009). Through speech signals, computer vision, movement recordings and biosignals, users' affective states can be determined (to a certain extent). EmotionML can help in automatically capturing and preserving the different emotional experiences and, through them, various perspectives on the emotional expression.

The further DP develops, the more important the area of 'emotion-related system behaviour' will become. With DP, not only is the storage of the KRs crucial: access to the system (including its user interface) and the retrieval of the KRs are of the utmost importance. In addition, non-specialists will increasingly need to be able to access the systems, to fully experience the "replay" from a wanted perspective. Affective computing can aid this interaction, as is generally acknowledged in the usability and human-computer interaction communities.

Let us consider the example of an abstract painting. The emotion will depend on the painting, the viewer, and the context (eg time). EmotionML can help in capturing the emotional expression of the painting; eg through (low-level) multimedia feature extraction. In further iterations, EmotionML can support capturing and preserving the emotional experiences through affective computing and, thereby, make emotional preservation automatic and incorporate different perspectives. However, an open issue remains in the user's perspective on the painting's expression; awareness of this perspective can possibly be supplied using emotion-related system behaviour.
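The dependence of the emotion on painting, viewer and context can be sketched as a small data structure. The following Python fragment is an illustration under our own assumptions, not a prescribed DP schema: the class `AffectiveAnnotation`, its fields, the function `perspective` and all sample records are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class AffectiveAnnotation:
    object_id: str  # preserved multimedia object (eg a painting)
    viewer: str     # who experienced or annotated the emotion
    when: date      # context: emotions vary over time
    source: str     # "manual" or "automatic" (affective computing)
    category: str   # emotion label


def perspective(
    annotations: List[AffectiveAnnotation],
    object_id: str,
    viewer: Optional[str] = None,
    until: Optional[date] = None,
) -> List[AffectiveAnnotation]:
    """Select the annotations of one object from a wanted perspective."""
    return [
        a
        for a in annotations
        if a.object_id == object_id
        and (viewer is None or a.viewer == viewer)
        and (until is None or a.when <= until)
    ]


# Hypothetical records, for illustration only.
records = [
    AffectiveAnnotation("painting-1", "curator", date(1999, 5, 1), "manual", "calm"),
    AffectiveAnnotation("painting-1", "visitor", date(2009, 5, 1), "automatic", "unease"),
    AffectiveAnnotation("painting-2", "visitor", date(2009, 6, 1), "manual", "joy"),
]

all_views = perspective(records, "painting-1")
visitor_view = perspective(records, "painting-1", viewer="visitor")
early_view = perspective(records, "painting-1", until=date(2000, 1, 1))
```

Keeping viewer, time and source with every annotation is what would allow the same painting to be "replayed" from different perspectives later on.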

Taken together, fully automated generation of KRs of multimedia is beyond science's current reach. Nevertheless, we introduce a new dimension: emotion. The same holds for this new dimension as for multimedia analysis in general: semi-automatic annotation is the best we can do. However, developments in multimedia analysis, in affective computing and in understanding humans continue to gain speed. So, it is a matter of time before enriched digital preservation of multimedia, including its affective annotation, evolves from theory to practice.

Links:

ALA (2007): http://www.ala.org/ala/mgrps/divs/alcts/resources/preserv/defdigpres0408.cfm

AIM (1993): http://groups.csail.mit.edu/medg/ftp/psz/k-rep.html

EmotionML (2009): http://www.w3.org/TR/emotionml/

SMIL (2008): http://www.w3.org/AudioVideo/

Guid (2009): http://emotion-research.net/acii/acii2009/guidelines-for-affective-signal-processing-from-lab-to-life/

Please contact:

Egon L. van den Broek

Human Media Interaction, University of Twente, The Netherlands

Karakter, Radboud University Medical Center Nijmegen, The Netherlands

Tel: +43 1 956 1530; +31 24 355 8120

E-mail:

http://www.human-centeredcomputing.com