by Roland Kwitt

Anomaly detection should be an integral part of every computer security system, since it is the only way to identify novel and modified attacks. Our work focuses on machine-learning approaches to anomaly detection and on the problems that come with them.

Because the number of reported security incidents caused by network attacks increases dramatically every year, attack detection systems have become a necessary part of every company's network security.

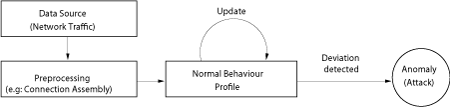

There are two general approaches to the detection problem. Most commercial intrusion detection systems employ some kind of signature-matching algorithm to detect malicious activity. In intrusion detection terminology this is called "misuse detection". Such systems generally have very low false alarm rates and work very well as long as the corresponding attack signatures are present. This last point, however, leads directly to the main drawback of misuse detection: missing signatures inevitably lead to undetected attacks. This is where the second approach, termed anomaly detection, comes into play. Anomaly detection maps normal behaviour to a baseline profile and tries to detect deviations from it. Thus, no a priori knowledge about attacks is needed and the problem of missing attack signatures does not arise.

Our work deals with the applicability of machine-learning approaches, specifically Self-Organising Maps (SOMs) and neural networks, to network anomaly detection. When a set of normal (problem-specific) feature vectors is presented to a Self-Organising Map, the map learns the specific characteristics of these vectors and provides a distance measure of how well a new input vector fits into the class of normal data. In contrast to many other machine-learning approaches, Self-Organising Maps do not require a teacher who determines the desired output.
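To make the idea of a distance measure concrete, the following is a minimal sketch of a small Self-Organising Map written with the Python standard library only. It is not the implementation used in this work: the map size, the decay schedules, the synthetic two-dimensional "normal" data and all function names (`train_som`, `anomaly_score`) are assumptions chosen purely for illustration. The anomaly score is taken to be the distance between an input vector and its best matching unit.

```python
import math
import random


def best_matching_unit(weights, x):
    """Return (index, distance) of the map unit whose weight vector is closest to x."""
    best_i, best_d = 0, float("inf")
    for i, w in enumerate(weights):
        d = math.dist(w, x)
        if d < best_d:
            best_i, best_d = i, d
    return best_i, best_d


def train_som(data, rows=4, cols=4, epochs=30, lr0=0.5, sigma0=2.0, seed=0):
    """Train a small rectangular SOM on unlabelled vectors (no teacher signal)."""
    rng = random.Random(seed)
    dim = len(data[0])
    grid = [(r, c) for r in range(rows) for c in range(cols)]
    # Initialise every unit with a randomly chosen training vector.
    weights = [list(rng.choice(data)) for _ in grid]
    steps = epochs * len(data)
    t = 0
    for _ in range(epochs):
        for x in rng.sample(data, len(data)):  # shuffled pass over the data
            frac = t / steps
            lr = lr0 * (1.0 - frac)                  # learning rate decays linearly
            sigma = max(sigma0 * (1.0 - frac), 0.3)  # neighbourhood radius shrinks
            bmu, _ = best_matching_unit(weights, x)
            br, bc = grid[bmu]
            for i, (r, c) in enumerate(grid):
                grid_dist_sq = (r - br) ** 2 + (c - bc) ** 2
                h = math.exp(-grid_dist_sq / (2.0 * sigma ** 2))
                # Pull the unit towards x, more strongly the closer it is to the BMU.
                for k in range(dim):
                    weights[i][k] += lr * h * (x[k] - weights[i][k])
            t += 1
    return weights


def anomaly_score(weights, x):
    """Distance from x to its best matching unit; larger means less 'normal'."""
    return best_matching_unit(weights, x)[1]


# Synthetic stand-in for normal feature vectors (an assumption, not real traffic).
rng = random.Random(42)
normal = [[rng.gauss(0.5, 0.05), rng.gauss(0.5, 0.05)] for _ in range(200)]
som = train_som(normal)

print("score of a typical vector:", anomaly_score(som, [0.5, 0.5]))
print("score of a distant vector:", anomaly_score(som, [3.0, 3.0]))
```

In practice a threshold on this score would separate normal from anomalous inputs; how that threshold is chosen is outside the scope of this sketch.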

The elements of these feature vectors depend strongly on the layer at which detection is carried out. If anomaly detection is performed at the connection level, for instance, connection-specific features such as packet counts and connection duration have to be collected. If detection is required at a higher level, for example at the application layer, a different set of features is necessary. In general, the more discriminative power a feature possesses, the better the detection results.
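As an illustration of connection-level features, the sketch below aggregates packet records into one feature vector per connection. The packet record layout `(timestamp, src, sport, dst, dport, size)` and the choice of three features (packet count, byte count, duration) are assumptions made for this example; a real detector would read such records from a capture and would likely use a richer feature set.

```python
from collections import defaultdict


def connection_features(packets):
    """Group packets by connection and return {connection_key: [count, bytes, duration]}.

    Each packet is a tuple (timestamp, src, sport, dst, dport, size).
    """
    conns = defaultdict(list)
    for ts, src, sport, dst, dport, size in packets:
        # Sort the two endpoints so both directions map to the same connection key.
        key = tuple(sorted([(src, sport), (dst, dport)]))
        conns[key].append((ts, size))

    features = {}
    for key, pkts in conns.items():
        times = [ts for ts, _ in pkts]
        sizes = [size for _, size in pkts]
        features[key] = [len(pkts), sum(sizes), max(times) - min(times)]
    return features


# Hypothetical packet records for two connections.
packets = [
    (0.0, "10.0.0.1", 40000, "10.0.0.2", 80, 60),
    (0.25, "10.0.0.2", 80, "10.0.0.1", 40000, 1500),
    (0.5, "10.0.0.1", 40000, "10.0.0.2", 80, 40),
    (1.0, "10.0.0.3", 40001, "10.0.0.2", 22, 100),
]
features = connection_features(packets)
for key, vector in features.items():
    print(key, vector)
```

Each resulting list can serve as one input vector for the detector, typically after scaling the features to comparable ranges.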

If we use a neural network architecture for anomaly detection, such as a feed-forward Multi-Layer Perceptron (MLP), the training procedure for normal behaviour might not be apparent at first sight, since such networks generally require instances of both normal and abnormal data in the training phase. Anomaly detection, however, assumes that we only have instances of benign, normal data. This problem can be solved by first training the neural network with a set of random vectors, pretending that all of these instances are anomalous. In the second step, the neural network is trained with the set of normal instances. In the ideal case, the net is then able to recognise recurrent benign behaviour as normal and to classify potential attacks as abnormal. Clearly, this relies on the assumption that malicious behaviour is potentially abnormal.
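The two-step procedure can be sketched with a very small MLP written in plain Python. Everything specific here is an assumption for illustration, not the configuration used in this work: the class name `TinyMLP`, one hidden layer of six sigmoid units, the learning rate, the number of epochs, uniform random vectors in the unit cube as the "anomalous" set, and a synthetic cluster as the "normal" set. The output is read as 1.0 for anomalous and 0.0 for normal.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class TinyMLP:
    """One hidden layer, one sigmoid output (1.0 = anomalous, 0.0 = normal)."""

    def __init__(self, n_in, n_hidden, rng):
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
                   for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b2 = 0.0

    def _hidden(self, x):
        return [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
                for row, b in zip(self.w1, self.b1)]

    def predict(self, x):
        h = self._hidden(x)
        return sigmoid(sum(w * hj for w, hj in zip(self.w2, h)) + self.b2)

    def train(self, samples, target, epochs, lr, rng):
        """Stochastic gradient descent on cross-entropy, same target for all samples."""
        for _ in range(epochs):
            for x in rng.sample(samples, len(samples)):
                h = self._hidden(x)
                out = sigmoid(sum(w * hj for w, hj in zip(self.w2, h)) + self.b2)
                delta_out = out - target  # gradient w.r.t. the output pre-activation
                for j, hj in enumerate(h):
                    # Back-propagate through the old output weight before updating it.
                    delta_h = delta_out * self.w2[j] * hj * (1.0 - hj)
                    self.w2[j] -= lr * delta_out * hj
                    self.b1[j] -= lr * delta_h
                    for k, xk in enumerate(x):
                        self.w1[j][k] -= lr * delta_h * xk
                self.b2 -= lr * delta_out


rng = random.Random(1)
dim = 3
# Step 1 data: random vectors, all pretended to be anomalous.
random_vectors = [[rng.random() for _ in range(dim)] for _ in range(200)]
# Step 2 data: synthetic stand-in for normal feature vectors.
normal_vectors = [[min(max(rng.gauss(0.3, 0.03), 0.0), 1.0) for _ in range(dim)]
                  for _ in range(200)]

net = TinyMLP(dim, 6, rng)
net.train(random_vectors, target=1.0, epochs=20, lr=0.5, rng=rng)  # step 1
score_after_step1 = net.predict(normal_vectors[0])
net.train(normal_vectors, target=0.0, epochs=20, lr=0.5, rng=rng)  # step 2
score_after_step2 = net.predict(normal_vectors[0])

print("normal sample after step 1:", score_after_step1)
print("normal sample after step 2:", score_after_step2)
```

How much of the first-step labelling survives the second step depends on the network size, the learning rate and the number of epochs, none of which are tuned in this sketch.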

One big problem with anomaly detectors is the lack of attack-free normal data in the training phase of a system. Most anomaly detection systems will miss an attack if the training data contains instances of that attack. However, no system administrator will spend time cleansing network traces of embedded attacks in order to produce adequate training data. What we need are detectors that tolerate contaminated training data and still produce accurate results. Again, machine learning with Self-Organising Maps can remedy this problem to a certain extent. Unless the amount of contamination is too high (which depends on the SOM parameters and the amount of normal data), no map regions will evolve that accept anomalous data.

Future work on this topic will include research on the feature construction process, which we found to be one of the most critical parts of an anomaly detector. Furthermore, we plan to evaluate machine-learning approaches that incorporate time as an important factor. Last but not least, we have to find a solution to the open problem of "concept drift": normal behaviour changes over time, which leads to high false-positive rates (normal behaviour classified as anomalous) in most detection systems.

Please contact:

Roland Kwitt, Salzburg Research, Austria

Tel: +43 664 1266900

E-mail: rkwitt@salzburgresearch.at

salzburgresearch.at