by Maria Koskinopoulou and Panos Trahanias

Imitation learning, which involves a robot observing and copying a human demonstrator, empowers a robot to “learn” new actions, and thus improves robot performance in tasks that require human-robot collaboration (HRC). We formulate a latent space representation of observed behaviors, and associate this representation with the corresponding one for target robotic behaviors. Effectively, a mapping of observed to reproduced actions is devised that abstracts action variations and differences between the human and robotic manipulators.

Learning new behaviors enables robots to expand their repertoire of actions and effectively learn new tasks. This can be formulated as a problem of learning mappings between world states and actions. Such mappings, called policies, enable a robot to select actions based on the current world state. Developing such policies by hand is often very challenging, so machine learning techniques have been proposed to cope with the inherent complexity of the task. One distinct approach to policy learning is “Learning from Demonstration” (LfD), also referred to in the literature as Imitation Learning or Programming by Demonstration (PbD) [1].
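To make the notion of a policy concrete, the following minimal sketch builds one from a handful of demonstrated state-action pairs by returning the action of the closest demonstrated state. It is an illustration only, not the method used in this work: the class name `NearestNeighbourPolicy`, the two-dimensional state features and the action labels are all hypothetical.

```python
import numpy as np


class NearestNeighbourPolicy:
    """Hypothetical policy: pick the action of the closest demonstrated state."""

    def __init__(self, states, actions):
        self.states = np.asarray(states, dtype=float)
        self.actions = list(actions)
        if len(self.states) != len(self.actions):
            raise ValueError("need exactly one action per demonstrated state")

    def __call__(self, state):
        # Euclidean distance from the query state to every demonstrated state.
        query = np.asarray(state, dtype=float)
        distances = np.linalg.norm(self.states - query, axis=1)
        return self.actions[int(np.argmin(distances))]


# Made-up 2D state features and action labels, for illustration only.
demo_states = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
demo_actions = ["forward_push", "sideward_reach", "reach_from_top"]

policy = NearestNeighbourPolicy(demo_states, demo_actions)
chosen = policy([0.9, 0.1])  # closest demonstrated state is [1.0, 0.0]
```

A lookup of this kind only covers states close to the demonstrations, which is one reason LfD approaches typically learn a more general representation.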

Our research in the LfD field is directed towards furnishing robots with task-learning capabilities, given a dataset of different demonstrations. Task learning is treated generically in our approach, in that we attempt to abstract from the differences present in individual demonstrations and summarize the learned task in an executable robotic behavior. An appropriate latent space representation is employed that effectively encodes demonstrated actions. A transformation from the actor’s latent space to the robot’s latent space dictates the action to be executed by the robotic system. Learned actions are in turn used in human-robot collaboration (HRC) scenarios to facilitate fluent cooperation between two agents – the human and the robotic system [2].

We conducted experiments with an actual robotic arm in scenarios involving reaching movements and a set of HRC tasks. In each task, a human opened a closed compartment and removed an object from it; the robot was then expected to perform the correct action to properly close the compartment. The compartments tested were drawers, closets and cabinets. The respective actions that the robotic systems should perform consisted of “forward pushing” (closing a drawer), “sideward reaching” (closing an open closet door) and “reaching from the top” (closing a cabinet). Furthermore, the robots were trained with two additional actions, namely “hello-waving” and “sideward pointing”. These additional actions were included in order to furnish the robots with a richer repertoire of actions and hence let them operate more realistically in the HRC scenarios.

In these experiments, the robotic arm is expected to reproduce a demonstrated act, taught in different demo-sessions. The robotic arm should be able to reproduce the action regardless of factors such as the trajectory followed to reach the goal, the hand configuration, the trajectory plan of the reaching scenario or the reaching velocity. The end effect of the learning process is the accomplishment of task-reproduction by the robot. Our experiments were developed using the JACO robotic arm designed by Kinova Robotics [L1], and have also been tested on the humanoid robot NAO by Aldebaran [L2].

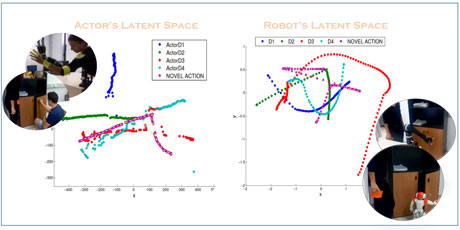

Actual demonstrations may be performed in different ways. In our study, we recorded RGB-D videos of a human performing the respective motion act. From each video, we extract the {x,y,z} coordinates of each joint, previously marked with prominent (yellow) markers. By tracking three joints of the demonstrator, we form a 9D representation of the observed world. The derived trajectories of human motion acts are subsequently represented in a way that is readily amenable to mapping in the manipulator’s space. The Gaussian Process Latent Variable Model (GP-LVM) representation is employed in order to derive an intermediate space, termed latent space, which abstracts the demonstrated behavior [3]. The latent space features only the essential characteristics of the demonstration space: those that have to be transferred to the robot-execution space, in order to learn the task. Effectively, the mapping of the observed space to the actuator’s space is accomplished via an intermediate mapping in the latent spaces (Figure 1).
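The construction of the 9D observation and its reduction to a low-dimensional space can be sketched as follows. This is a simplified illustration under stated assumptions: the joint names (shoulder, elbow, wrist) and the synthetic trajectories are placeholders, and plain PCA is used as a linear stand-in for the GP-LVM, which is a nonlinear probabilistic model and is not implemented here. The function names are hypothetical.

```python
import numpy as np


def stack_joint_trajectories(joints):
    """Concatenate per-joint (T, 3) trajectories into one (T, 3 * J) array."""
    arrays = [np.asarray(j, dtype=float) for j in joints]
    n_frames = arrays[0].shape[0]
    for a in arrays:
        if a.shape != (n_frames, 3):
            raise ValueError("each joint trajectory must have shape (T, 3)")
    return np.hstack(arrays)


def pca_latent(observations, latent_dim=2):
    """Project centred observations onto their top principal directions.

    Assumption: PCA replaces the GP-LVM here purely for illustration.
    """
    centred = observations - observations.mean(axis=0)
    _, _, vt = np.linalg.svd(centred, full_matrices=False)
    return centred @ vt[:latent_dim].T


# Synthetic placeholder trajectories: 50 frames of {x, y, z} per joint.
t = np.linspace(0.0, 1.0, 50)
shoulder = np.column_stack([0.0 * t, 0.0 * t, 1.4 + 0.0 * t])
elbow = np.column_stack([0.3 * t, 0.1 * t, 1.2 + 0.05 * t])
wrist = np.column_stack([0.6 * t, 0.2 * t, 1.0 + 0.2 * t])

observed = stack_joint_trajectories([shoulder, elbow, wrist])  # shape (50, 9)
latent = pca_latent(observed, latent_dim=2)                     # shape (50, 2)
```

Each row of `observed` is one frame of the 9D observed world; each row of `latent` is the corresponding point in the reduced space.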

Figure 1: The formatted actor’s and robot’s latent spaces, as derived from the respective learnt human motion acts, result in the final robotic motion reproduction.

Our initial results regarding the latent space representation are particularly encouraging. We performed many demonstrations of left-handed reaching and pushing movements. Interestingly, the resulting representations assume overlapping trajectories that are distinguishable, facilitating compact encoding of the behavior. We are currently working on deriving the analogous representation for actions performed by the robotic arm, as well as associating the two representations via learning techniques.
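One simple way such an association between two latent spaces could be learned is an affine least-squares fit between paired latent points. The sketch below is only an assumption about what a baseline might look like, not the technique adopted in this work; it presumes that human and robot latent trajectories are already time-aligned, and the data, function names and "true" transformation are synthetic.

```python
import numpy as np


def fit_affine_map(source, target):
    """Least-squares affine map such that target is approximately source @ W + b."""
    ones = np.ones((source.shape[0], 1))
    design = np.hstack([source, ones])  # append a bias column
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coeffs[:-1], coeffs[-1]      # weights W, bias b


def apply_affine_map(points, weights, bias):
    """Map points from the source latent space into the target latent space."""
    return points @ weights + bias


# Synthetic, time-aligned latent points (hypothetical data).
rng = np.random.default_rng(0)
human_latent = rng.normal(size=(40, 2))
true_w = np.array([[0.8, -0.2], [0.1, 1.1]])
true_b = np.array([0.05, -0.3])
robot_latent = human_latent @ true_w + true_b

weights, bias = fit_affine_map(human_latent, robot_latent)
predicted = apply_affine_map(human_latent, weights, bias)
```

A linear map of this kind cannot capture nonlinear differences between human and robot kinematics, so it would serve at most as a baseline against which learned nonlinear associations are compared.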

In summary, we have laid the groundwork for research in robotic LfD and have pursued initial work towards building the latent representation of demonstrated actions. In this context, we aim to capitalize on the latent space representation to derive robust mappings from the observed space to the robot–actuator’s space. Preliminary results indicate that the adopted representation is appropriate for LfD tasks.

Links:

[L1] http://kinovarobotics.com/products/jaco-robotics/

[L2] https://www.aldebaran.com/en/humanoid-robot/nao-robot

References:

[1] S. Schaal, A. Ijspeert, A. Billard: “Computational approaches to motor learning by imitation”, Philosophical Transactions of the Royal Society of London. Series B, Biological Sciences, 537–547, 2003.

[2] M. Koskinopoulou, S. Piperakis, P. Trahanias: “Learning from Demonstration Facilitates Human-Robot Collaborative Task Execution”, in Proc. 11th ACM/IEEE Intl. Conf. Human-Robot Interaction (HRI 2016), New Zealand, March 2016.

[3] N.D. Lawrence: “Gaussian Process Latent Variable Models for Visualisation of High Dimensional Data”, Advances in Neural Information Processing Systems 16, 329–336, 2004.

Please contact:

Maria Koskinopoulou and Panos Trahanias

ICS-FORTH, Greece

E-mail: {mkosk, trahania}@ics.forth.gr