by Francesco Banterle, Franco Alberto Cardillo, and Luigi Malomo

Augmented Reality (AR), the augmentation of a view of the physical world with digital media, has recently gained popularity thanks to the increasing computational power and widespread diffusion of mobile devices such as tablets and smartphones. These developments make many practical applications of AR technology possible, especially in the cultural heritage domain. LecceAR is an advanced app that allows tourists to view rich 3D reconstructions of cultural heritage sites in the city of Lecce, Italy.

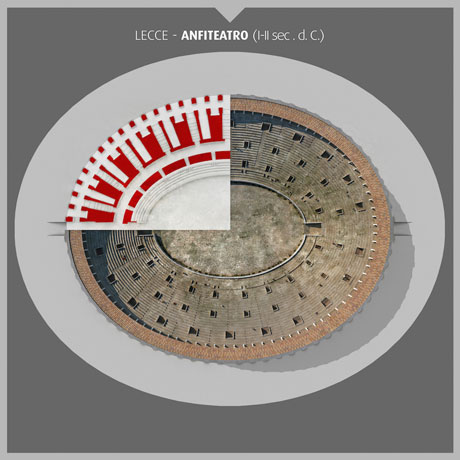

LecceAR is an iOS app for markerless AR that will be exhibited at the MUST museum in Lecce, Italy. The app shows a rich 3D reconstruction of the Lecce Roman amphitheatre, which is only partially unearthed (see Figure 1). State-of-the-art algorithms in computer graphics and computer vision allow the ancient amphitheatre to be viewed and explored in real time.

LecceAR is the result of a collaboration between several institutes of the Italian National Research Council (CNR): it was developed by the Visual Computing Laboratory (ISTI-CNR), NeMIS (ISTI-CNR), and IBAM-CNR as a deliverable for the Italian project “DiCeT: Living Lab di Cultura e Tecnologia” (PON04a2_D). The project began in early 2013 and, together with LecceAR, was showcased at the MUST museum in Lecce during an exhibition in September 2015.

Figure 1: The amphitheatre’s current state.

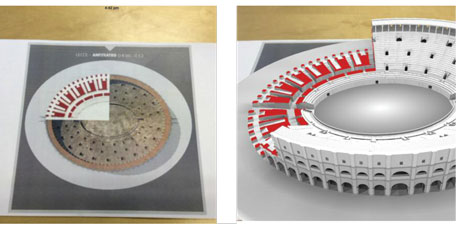

Figure 2: The target image used in the app.

Figure 3: Screenshots of the app running. Left: the app just before augmenting the viewed target image. Right: the app with the reconstructed 3D model overlaid on the target image.

Although commercial AR frameworks exist and are competitively priced, they usually have low-quality rendering engines. For example, they may limit the number of triangles that can be rendered for a 3D object, or restrict how the final visualization can be customized. These are key issues in the cultural heritage domain in particular, which requires large 3D models that are typically the output of a 3D scanning campaign. We therefore opted to develop the app from scratch, gaining the flexibility and the capability to render large and complex 3D models.

LecceAR was developed using standard computer vision and graphics libraries, namely OpenCV and OpenGL. The app comprises two modules: a matching and tracking module, and a renderer.
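To make the division of labour concrete, here is a minimal Python sketch of the per-frame loop that such a two-module design implies. It is not LecceAR's code: the class `MaTrackSketch`, the function `run_pipeline`, and the injected `detect`, `track`, and `render` callables are hypothetical placeholders (in the real app these roles are filled by OpenCV-based matching and tracking and by the OpenGL renderer). It only illustrates the control flow: recognition runs until a target is found, tracking takes over afterwards, and recognition resumes when tracking is lost.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence

# A 3x3 matrix mapping target-image coordinates to video-frame coordinates.
Transform = List[List[float]]


@dataclass
class MaTrackSketch:
    """Hypothetical stand-in for the matching-and-tracking module.

    `detect` and `track` are injected placeholders; in a real app they would
    wrap feature matching and frame-to-frame tracking (e.g. with OpenCV).
    """
    detect: Callable[[object], Optional[Transform]]
    track: Callable[[object, Transform], Optional[Transform]]
    current: Optional[Transform] = None

    def process(self, frame: object) -> Optional[Transform]:
        if self.current is None:
            # No target locked yet: run the (typically more expensive) recognition step.
            self.current = self.detect(frame)
        else:
            # Target already found: update the previous transformation.
            # None means tracking was lost, so recognition runs on the next frame.
            self.current = self.track(frame, self.current)
        return self.current


def run_pipeline(frames: Sequence[object], matrack: MaTrackSketch,
                 render: Callable[[object, Optional[Transform]], None]) -> None:
    """Feed each camera frame through the tracker, then hand the result to the renderer."""
    for frame in frames:
        transform = matrack.process(frame)
        # A real renderer would draw the camera frame and overlay the 3D model
        # only when a transformation is available.
        render(frame, transform)
```

Because the renderer only ever receives an optional transformation, it stays independent of how that transformation was obtained, mirroring the split between the two modules.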

The first module, ‘MaTrack’, processes frames from the device’s camera to determine whether they contain a known target image. If a frame contains a target, the module computes a geometric transformation mapping the target onto the video frame and initializes the tracker, which stabilises this transformation. Furthermore, the tracker keeps the virtual and physical worlds aligned even when the target is only partially visible. Many AR apps recognize only synthetic images, but our app will be part of a museum exhibition, where aesthetic constraints on the target image are crucial. Therefore, the target used in the current implementation, shown in Figure 2, is a standard picture.
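The article does not specify how MaTrack represents the transformation. For a planar target such as the picture in Figure 2, a common choice is a 3×3 homography. The Python sketch below, offered only as an illustration, estimates one from exactly four point correspondences with the direct linear transform (fixing the bottom-right entry to 1) and then maps target points into the frame. The names `homography_from_4_points` and `apply_homography` are hypothetical; a production matcher would instead fit the homography to many matched keypoints with a robust estimator such as RANSAC (for example OpenCV's `findHomography`), after normalizing coordinates.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix3 = List[List[float]]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate correspondences (e.g. three collinear points)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / m[r][r]
    return x


def homography_from_4_points(src: Sequence[Point], dst: Sequence[Point]) -> Matrix3:
    """DLT with h33 fixed to 1: each pair (x, y) -> (u, v) gives two linear equations."""
    if len(src) != 4 or len(dst) != 4:
        raise ValueError("exactly four correspondences are required")
    a: List[List[float]] = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h31 x + h32 y + 1) = h11 x + h12 y + h13
        a.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        # v * (h31 x + h32 y + 1) = h21 x + h22 y + h23
        a.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    h = _solve(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(H: Matrix3, p: Point) -> Point:
    """Map a target-image point into the video frame (projective division by w)."""
    x, y = p
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)
```

Four correspondences determine the eight unknowns exactly, which is why RANSAC-style estimators repeatedly sample minimal sets of four matches and keep the hypothesis that most of the other matches agree with.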

The second module, Viewer3D, is a proprietary component for rendering virtual objects. It is a real-time OpenGL ES 2.0 renderer developed for the iOS platform using the VCG library. Setting it up requires only a few lines of code, and it can be encapsulated inside a UIView; since a UIView is the basic iOS widget for displaying graphics on screen, this makes the renderer very convenient to use. Once the renderer is initialized, a 3D model is rendered by loading it, assigning it a shader, and letting Viewer3D automatically convert the transformation computed by MaTrack into an OpenGL matrix. Viewer3D supports several file formats, including PLY and OBJ, the de facto standards for 3D scanned models and for the 3D modelling packages used in the cultural heritage domain. Moreover, the renderer allows different attributes to be defined over the vertices of a 3D model to encode normals, texture coordinates, colours, ambient occlusion, and so on, which increases the system's flexibility in coping with different rendering and shading needs. In terms of performance, the renderer achieves up to 60 fps on a first-generation iPad Air and on an iPhone 5S while rendering a textured 3D model of 2 million triangles with a Phong lighting shader, without needing to stream data from flash memory. Figure 3 shows the renderer in action, visualizing the Lecce Roman amphitheatre.
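The article says only that Viewer3D converts MaTrack's transformation into an OpenGL matrix automatically. Assuming that transformation is a homography H from the target plane (Z = 0) to pixel coordinates, and that the camera intrinsics fx, fy, cx, cy are known, one standard conversion recovers the pose from H ∝ K[r1 r2 t], completes the rotation with r3 = r1 × r2, flips the y and z axes to go from the OpenCV camera convention (y down, z forward) to the OpenGL one (y up, looking down -z), and returns the model-view matrix in column-major order. The Python function `homography_to_gl_modelview` is a hypothetical sketch of that technique, not Viewer3D's code; the projection matrix, which is also built from the intrinsics, is omitted.

```python
import math
from typing import List, Sequence

Matrix3 = Sequence[Sequence[float]]


def _norm(v: Sequence[float]) -> float:
    return math.sqrt(sum(c * c for c in v))


def _cross(a: Sequence[float], b: Sequence[float]) -> List[float]:
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def homography_to_gl_modelview(H: Matrix3, fx: float, fy: float,
                               cx: float, cy: float) -> List[float]:
    """Hypothetical sketch: plane-to-image homography -> OpenGL model-view matrix.

    Assumes H maps target-plane points (X, Y, 1) to pixels and that the
    pinhole intrinsics K = [[fx, 0, cx], [0, fy, cy], [0, 0, 1]] are known.
    Returns 16 floats in column-major order.
    """
    def apply_k_inv(v: Sequence[float]) -> List[float]:
        x, y, z = v
        return [(x - cx * z) / fx, (y - cy * z) / fy, z]

    # Columns of K^-1 H are proportional to r1, r2 and t.
    m1, m2, m3 = (apply_k_inv([H[0][j], H[1][j], H[2][j]]) for j in range(3))

    # r1 and r2 must be unit vectors: average their norms to fix the scale.
    scale = 2.0 / (_norm(m1) + _norm(m2))
    # H is only defined up to sign: keep the target in front of the camera (z > 0).
    if scale * m3[2] < 0:
        scale = -scale

    r1 = [scale * c for c in m1]
    r2 = [scale * c for c in m2]
    t = [scale * c for c in m3]
    r3 = _cross(r1, r2)

    # 3x4 pose [R | t] in the OpenCV camera convention (x right, y down, z forward).
    pose = [[r1[i], r2[i], r3[i], t[i]] for i in range(3)]
    # OpenGL's camera has y up and looks down -z: negate the y and z rows.
    flip = (1.0, -1.0, -1.0)
    rows = [[flip[i] * v for v in pose[i]] for i in range(3)]
    rows.append([0.0, 0.0, 0.0, 1.0])

    # Column-major flattening, the layout OpenGL ES expects for matrix uniforms.
    return [rows[r][c] for c in range(4) for r in range(4)]
```

Under image noise the recovered rotation is only approximately orthonormal, so a more careful implementation would re-orthonormalize it (for example via SVD) before uploading it with `glUniformMatrix4fv`, whose transpose argument must be `GL_FALSE` in OpenGL ES 2.0.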

The next iteration of LecceAR will focus on enabling the app to recognize and track thousands of images by improving the similarity search. We would also like to extend the system to work on non-planar scenes, i.e., to use the 3D metric structure of the physical world directly.

We hope that LecceAR provides tourists with a useful app for visualizing cultural heritage sites as they were at the peak of their magnificence, such as the Lecce Roman amphitheatre in the 2nd century AD.

Links:

LecceAR Official website: http://vcg.isti.cnr.it/LecceAR/

NeMIS-MIR: http://nemis.isti.cnr.it/groups/multimedia-information-retrieval

Visual Computing Laboratory: http://vcg.isti.cnr.it/

IBAM: http://www.ibam.cnr.it/en/

VCG Library: http://vcg.isti.cnr.it/vcglib/

Please contact:

Giuseppe Amato, Roberto Scopigno

ISTI-CNR, Italy

E-mail: